Nvidia, the undisputed leader in graphics processing units (GPUs) and a pivotal enabler of the artificial intelligence revolution, commenced its highly anticipated annual GTC developer conference in San Jose, California, on Monday, March 16. The event, running through March 19, is set to be headlined by CEO Jensen Huang’s keynote address, scheduled for 11 a.m. PT / 2 p.m. ET. This year’s GTC, officially the GPU Technology Conference, arrives amidst unprecedented attention on Nvidia, whose technology forms the backbone of modern AI development, propelling the company to a trillion-dollar valuation and cementing its status as a critical player in the global technology landscape.

The Marquee Event: Jensen Huang’s Keynote and GTC’s Evolution

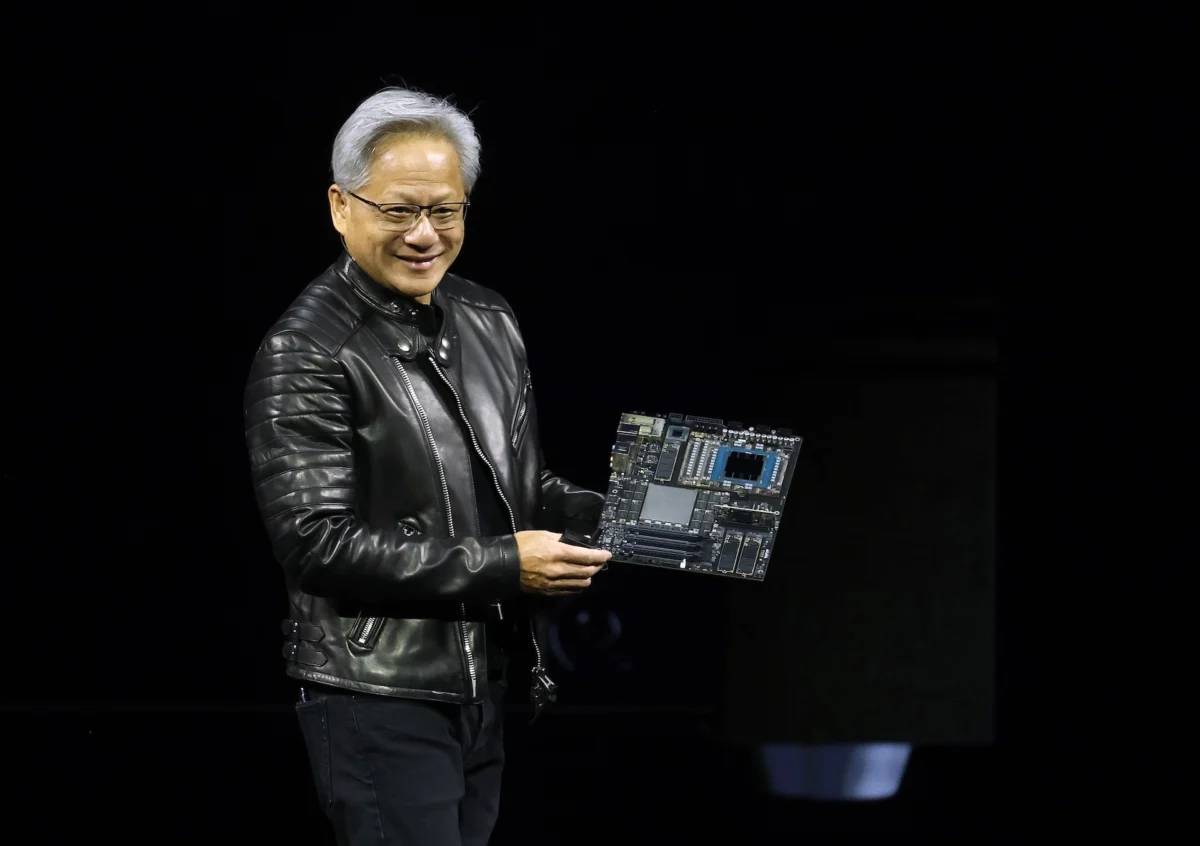

Jensen Huang’s two-hour keynote is traditionally the most closely watched segment of GTC, serving as Nvidia’s primary platform to unveil new products, announce strategic partnerships, and articulate its vision for the future of computing and artificial intelligence. Attendees can watch the address in person at the SAP Center in San Jose or join a global audience by livestreaming it from the event’s official website or on YouTube.

GTC itself has evolved dramatically since its inception in 2009. Initially conceived as a forum for GPU computing, the conference has transformed into the world’s premier AI developer event, mirroring Nvidia’s strategic pivot and overwhelming success in the AI domain. This year’s four-day agenda is packed with over 900 sessions, workshops, and expert panels, delving into the latest advancements and future trajectories of AI across a multitude of industries. From pioneering breakthroughs in healthcare and drug discovery to the complex challenges of robotics, autonomous vehicles, and scientific research, GTC 2026 promises to offer a comprehensive look at how AI is reshaping every sector of the global economy. The sheer breadth of topics underscores the ubiquity of Nvidia’s influence, with its CUDA platform and GPU architectures providing the foundational computational power for innovators worldwide.

Anticipated Hardware Breakthroughs: Dominating the Inference Frontier

While Nvidia currently commands an estimated 80% of the AI training market (training being the computationally intensive process of teaching AI models to recognize patterns and make decisions), the company is widely rumored to be making a significant push into AI inference. Inference, the process by which a trained AI model applies its learned knowledge to generate real-time responses or predictions, represents the operational phase of AI. Scaling AI applications across vast user bases and diverse enterprise environments requires inference that is efficient, fast, and cost-effective.

The Wall Street Journal previously reported on Nvidia’s plans to release a new chip specifically designed to accelerate AI inference. This rumored hardware is seen as Nvidia’s latest strategic move to extend its dominance beyond the training phase, where its Hopper and Blackwell architectures are industry standards, into the high-growth inference segment. Faster and cheaper inference is widely recognized as one of the last major bottlenecks preventing the broad scaling and commercialization of AI applications. A dedicated inference chip from Nvidia would not only solidify its leadership but also intensify competition with major tech players like Google, Amazon, and Microsoft, all of whom are developing custom application-specific integrated circuits (ASICs) to power their own AI inference workloads and cloud services. Nvidia’s entry into specialized inference hardware could dramatically alter the competitive landscape, offering enterprises an optimized solution that leverages Nvidia’s extensive software ecosystem and developer tools, thereby accelerating the deployment of AI at the edge and in data centers globally.

Strategic Software Initiatives: Ushering in the Era of AI Agents with NemoClaw

Beyond hardware, Nvidia is also expected to make significant announcements on the software front, particularly in the burgeoning field of AI agents. Wired magazine previously reported on the anticipated release of "NemoClaw," an open-source platform designed for enterprise AI agents. AI agents are sophisticated software programs capable of autonomously carrying out multi-step tasks, learning from interactions, and adapting their behavior over time. They represent a significant leap forward in AI capabilities, moving beyond single-task automation to more complex, intelligent workflows.

NemoClaw, if launched as rumored, would provide businesses with a structured and standardized framework to build, deploy, and manage these advanced AI agents. This strategic move would position Nvidia to directly compete with, or at least mirror, similar offerings from companies like OpenAI, which are also investing heavily in developing agentic AI capabilities for enterprise use. By embracing an open-source model, Nvidia could foster a vibrant developer ecosystem around NemoClaw, encouraging widespread adoption and customization. This approach aligns with Nvidia’s long-standing strategy of building comprehensive platforms – combining powerful hardware with accessible software – to accelerate innovation. The introduction of NemoClaw would underscore Nvidia’s commitment to moving further up the AI software stack, providing end-to-end solutions that simplify the integration of complex AI functionalities into enterprise operations, from automated customer service and data analysis to advanced robotics control and supply chain optimization.

Ecosystem Expansion and High-Profile Partnerships: The Groq Factor

GTC is historically a nexus for partnership announcements, showcasing Nvidia’s collaborative efforts across its vast ecosystem. This year, particular attention is focused on Nvidia’s relationship with Groq, an AI chip challenger known for its ultra-low-latency Language Processing Unit (LPU) inference engine. TechCrunch reported late last year that Nvidia paid $20 billion to license Groq’s technology, a deal that also saw Groq’s founder, Jonathan Ross; its president, Sunny Madra; and other key team members move to Nvidia.

The integration of Groq’s technology and talent into Nvidia is a subject of intense industry curiosity. Groq’s LPU architecture is engineered for unprecedented inference speed, particularly for large language models (LLMs), which could significantly bolster Nvidia’s efforts to dominate the inference market. Analysts like Kevin Cook, a senior equity strategist at Zacks Investment Research, have highlighted the importance of GTC in clarifying Nvidia’s plans for this licensed technology. The strategic implications are vast: it could equip Nvidia with an even more formidable array of inference solutions, potentially combining the best aspects of Groq’s specialized LPU with Nvidia’s broader GPU capabilities. This tie-up could allow Nvidia to address a wider spectrum of inference workloads with optimal efficiency, further insulating its market position against emerging competitors and reinforcing its status as the go-to provider for AI infrastructure. The integration of Groq’s expertise could lead to hybrid architectures or specialized product lines that unlock new levels of performance for real-time AI applications, making this partnership a critical point of interest at GTC 2026.

Nvidia’s Dominance in a Booming AI Market: Background and Context

Nvidia’s trajectory has been nothing short of meteoric, driven by the insatiable global demand for AI. The company, founded in 1993, initially rose to prominence as a pioneer in graphics processing for gaming. However, its foresight in recognizing the parallel processing capabilities of GPUs for general-purpose computing, particularly for complex mathematical operations central to machine learning, transformed it into the "picks and shovels" provider for the AI gold rush. The introduction of its CUDA programming model in 2006 effectively unlocked the GPU’s potential for scientific computing and, subsequently, AI research.

Today, Nvidia’s H100 and A100 GPUs are the workhorses of virtually every major AI lab, cloud provider, and enterprise deploying AI. This market dominance is reflected in Nvidia’s staggering financial performance, with quarterly revenues consistently exceeding expectations and its market capitalization soaring past the trillion-dollar mark, making it one of the most valuable companies globally. The company’s growth is directly tied to the exponential expansion of the AI market, which is projected to reach hundreds of billions of dollars in the coming years, fueled by advancements in generative AI, autonomous systems, and personalized intelligence. Nvidia’s strategic investments in software platforms like CUDA, cuDNN, and TensorRT, alongside its hardware innovations, have created a powerful, self-reinforcing ecosystem that is difficult for competitors to replicate. GTC 2026 is therefore not just a product launch event; it is a declaration of Nvidia’s ongoing commitment to shaping the future trajectory of artificial intelligence.

Broader Implications and Forward Outlook

GTC 2026 is poised to be a pivotal moment not only for Nvidia but for the entire AI industry. The rumored announcements in both hardware and software underscore Nvidia’s aggressive strategy to maintain and expand its leadership in a rapidly evolving and increasingly competitive landscape. If the company does deliver the rumored dedicated inference chip and an open-source AI agent platform like NemoClaw, the implications will be far-reaching.

For Nvidia, these innovations would solidify its position as an indispensable full-stack AI provider, offering solutions that span from foundational computing power to advanced application development. This diversification would mitigate risks associated with over-reliance on the training market and open up vast new revenue streams in the enterprise AI and edge computing sectors. For the broader AI industry, Nvidia’s advancements promise to accelerate the adoption and deployment of AI technologies across various sectors. Faster, cheaper inference capabilities will make AI more accessible and practical for real-world applications, while standardized platforms for AI agents will empower businesses to build more sophisticated and autonomous systems. This will inevitably intensify the race among chipmakers and software developers to deliver superior AI solutions, driving further innovation and competition.

As thousands of developers, researchers, and industry leaders convene in San Jose and millions more watch online, GTC 2026 stands as a testament to the transformative power of AI and Nvidia’s central role in driving its progress. The announcements made this week will undoubtedly set the tone for the next phase of AI development, shaping how enterprises leverage intelligent automation, how autonomous systems interact with the physical world, and how groundbreaking scientific discoveries are made possible by advanced computational power. The stakes are incredibly high, and the world is watching to see how Nvidia will continue to redefine the boundaries of what’s possible with AI.