The digital landscape, increasingly permeated by sophisticated artificial intelligence, recently witnessed a stark illustration of AI’s current limitations. A seemingly innocuous children’s word search puzzle, posted on Reddit, challenged the collective intelligence of the internet and quickly exposed what many users believe to be the pitfalls of unchecked AI-generated content. The puzzle, an Easter-themed graphic intended for children, appeared to defy its fundamental purpose: none of the words on its accompanying list could be found in the grid, leading to widespread suspicion that it was hastily generated by AI and published without any human editorial review. The incident has ignited a discussion among digital publishers, content creators, and the general public about the integrity of AI-produced material and the indispensable role of human oversight in maintaining quality and trust.

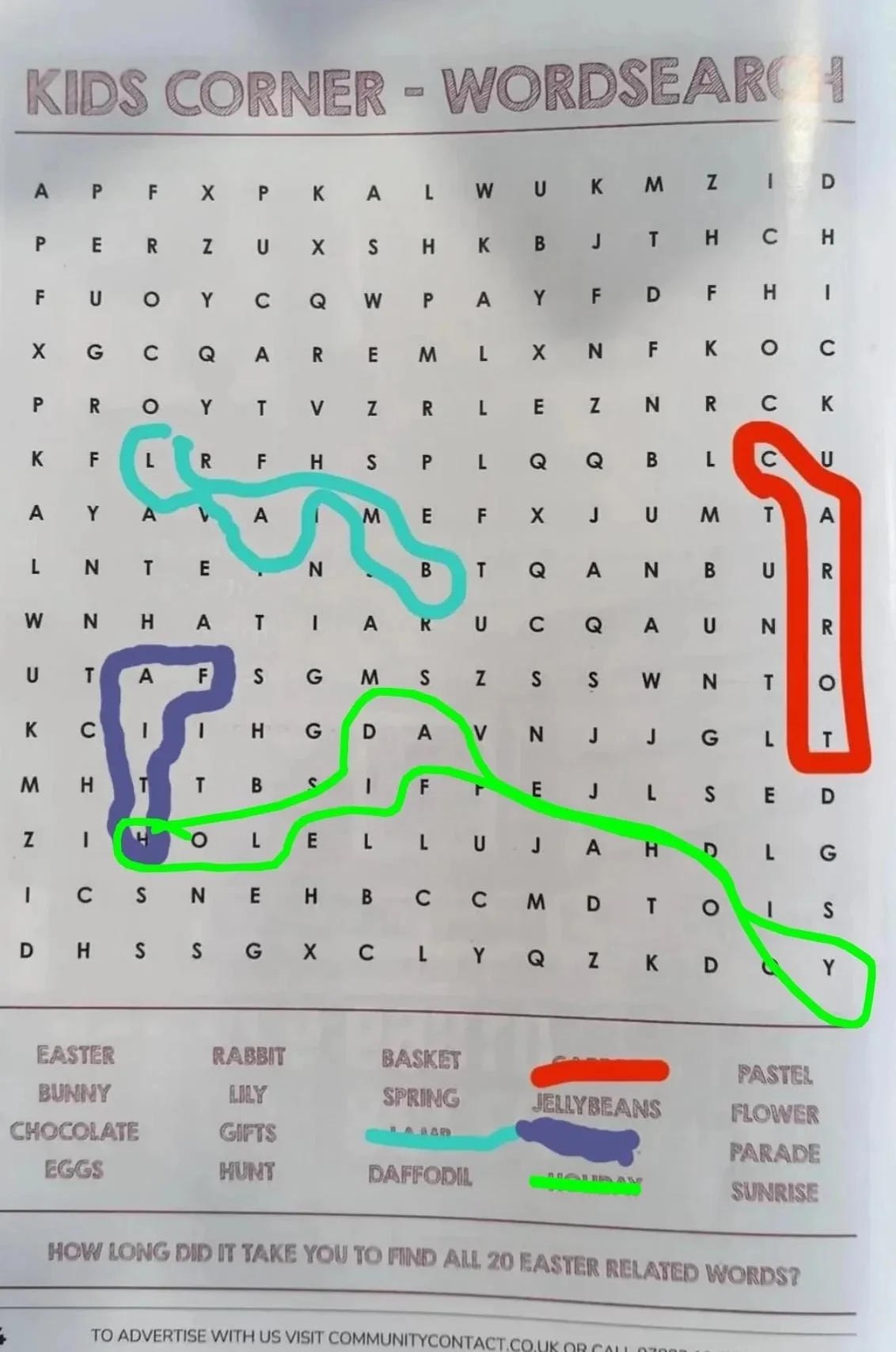

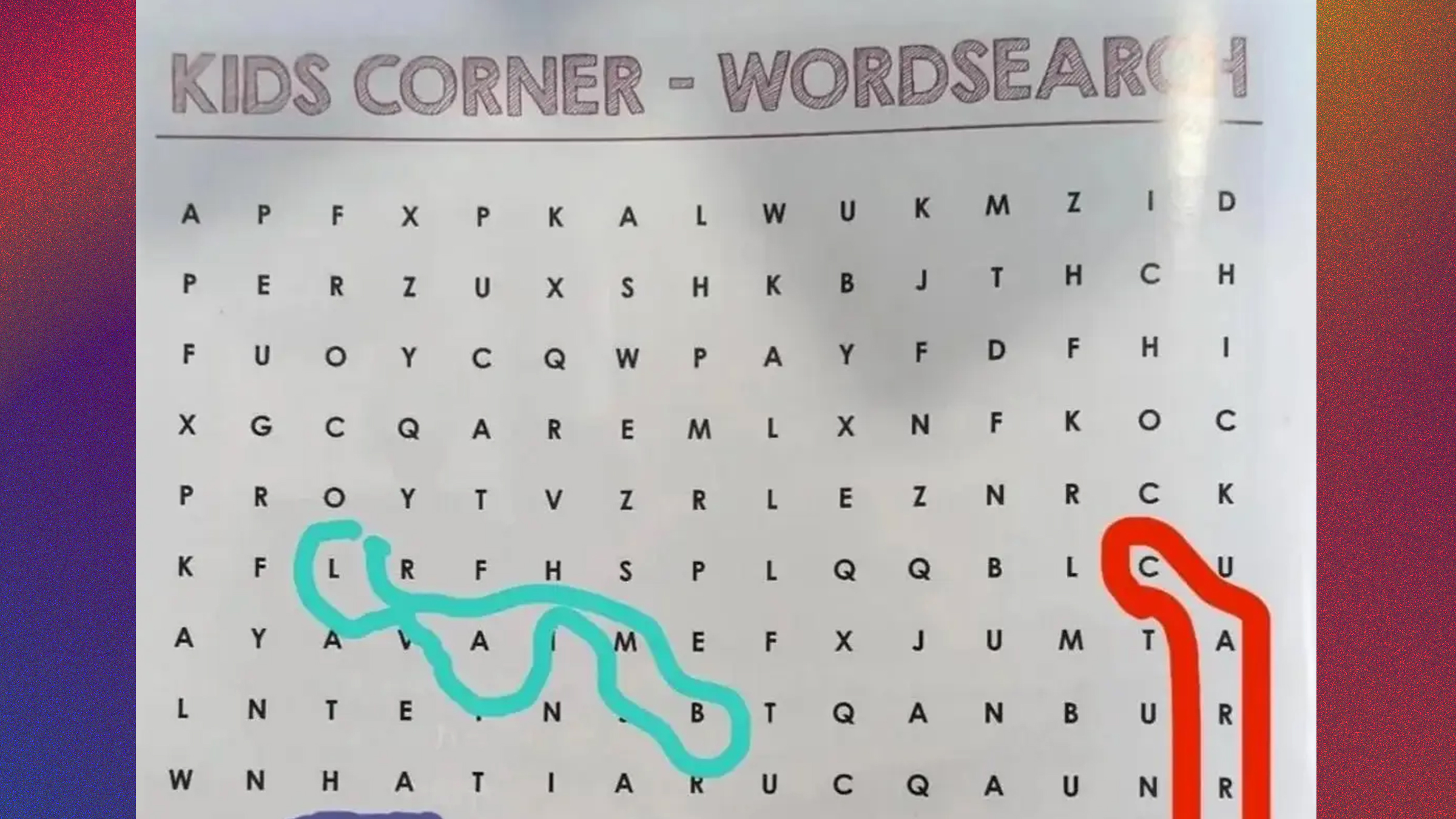

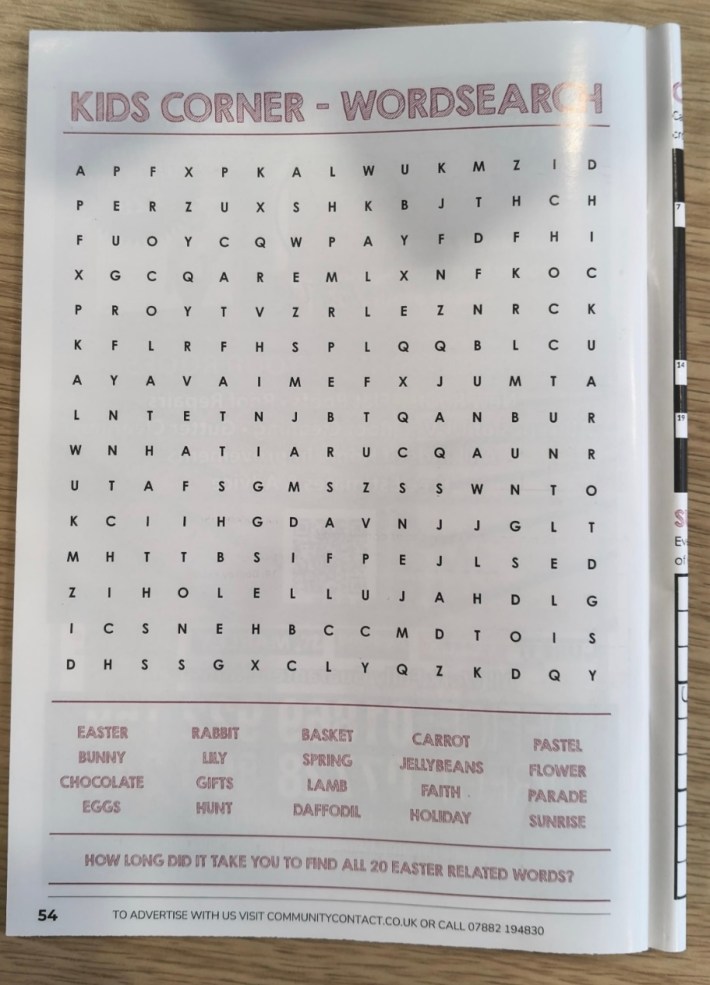

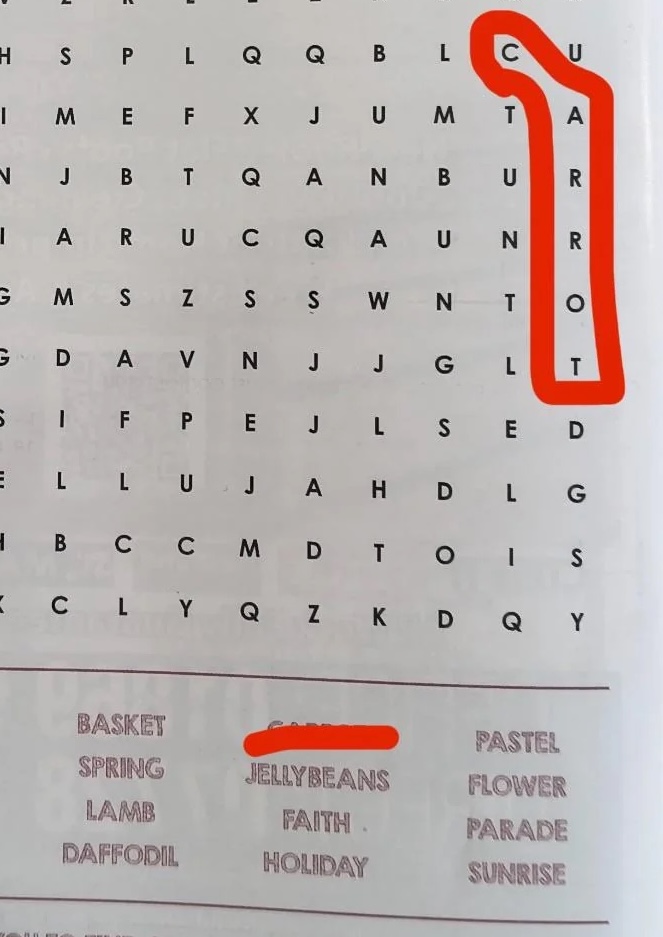

The catalyst for this viral phenomenon was Reddit user u/XcuseMeWat, who recently shared an image of the perplexing word search. Accompanying the post was a direct challenge: "I defy you to find a single word in this ‘kids’ word search." The sentiment captured the immediate frustration and bewilderment of anyone attempting the puzzle, which, despite listing common Easter-related terms such as "BUNNY," "EGG," and "CHOCOLATE," presented a grid of letters that seemingly contained none of them. The post rapidly gained traction, accumulating over 11,000 upvotes and generating more than 3,000 comments as users from across the globe converged to tackle the seemingly impossible task.

The Unsolvable Riddle: A Community’s Collective Effort

The Reddit thread quickly transformed into a collaborative, albeit exasperated, problem-solving session. Users, initially approaching the puzzle with earnest attempts to locate the listed words, soon resorted to increasingly creative and often humorous strategies. Some users, determined to find something, confessed to "breaking every rule" of traditional word search solving. This included searching diagonally where not permitted, reversing word directions beyond standard conventions, or even forcing interpretations of letter clusters into approximations of the target words. The futility of these efforts only reinforced the growing suspicion that the puzzle was fundamentally flawed.

Many users began to share screenshots of their attempts, circling accidental, unlisted words they stumbled upon by chance, or humorously drawing circles around entirely made-up sequences of letters. For instance, one user might highlight "CAT" or "DOG" even though these words were not on the official list, simply because they existed within the jumbled grid. Another might playfully circle "NONSENSE" or "FRUSTRATION," reflecting the prevailing mood of the thread. This collective struggle underscored the core issue: a children’s puzzle, designed for straightforward engagement, was instead proving to be an exercise in digital futility, bordering on absurdity.

The Inevitable Conclusion: AI’s Unchecked Hand

As the discussion progressed, a consensus rapidly formed among the Reddit community regarding the origin of the defective puzzle. The overwhelming majority of users pointed to artificial intelligence as the likely culprit. One highly upvoted comment succinctly articulated the sentiment: "AI made, and published without any checks." This conclusion was not reached lightly but was based on observed patterns consistent with common AI "hallucinations" or errors in content generation, particularly when dealing with tasks that require logical consistency and adherence to specific rules.

Unlike human-designed puzzles, which are meticulously crafted to ensure solvability and a specific distribution of words, AI-generated puzzles, especially those created without explicit constraints or robust validation layers, can often fail spectacularly at these fundamental requirements. The random, chaotic arrangement of letters, devoid of the intended hidden words, became a digital fingerprint of an automated process gone awry, highlighting AI’s current limitations in generating contextually relevant and functionally correct content without human oversight.

Background: The Proliferation of Generative AI

The incident occurs against a backdrop of the exponential rise of generative AI tools. Over the past few years, platforms like OpenAI’s ChatGPT, Google’s Bard (now Gemini), and various image generation models such as Midjourney and DALL-E have democratized access to AI capabilities, enabling individuals and organizations to produce vast amounts of text, images, and even simple game elements with unprecedented speed. This technological leap has revolutionized content creation workflows, offering the promise of increased efficiency, reduced costs, and rapid scalability.

Industries across the board, from marketing and journalism to education and entertainment, are actively exploring or implementing AI into their content pipelines. Market research firms project significant growth in the AI content generation market, with some estimates suggesting a compound annual growth rate (CAGR) exceeding 25% in the coming years. This rapid adoption is driven by intense pressure to produce continuous streams of fresh content for websites, social media, educational materials, and even supplementary media like the word search in question. The allure of AI’s ability to automate tedious or time-consuming tasks is undeniable, making it an attractive solution for publishers operating under tight deadlines and budget constraints.

However, as the Reddit word search incident vividly demonstrates, the enthusiasm for AI must be tempered with a critical understanding of its current limitations. While AI excels at pattern recognition, data synthesis, and creative generation within certain parameters, it often struggles with tasks requiring true logical reasoning, nuanced understanding of context, or adherence to complex, implicit rules: precisely the attributes necessary for creating a functional puzzle.

A History of Digital Puzzles vs. AI’s Current Capabilities

The irony of the AI-generated word search’s failure is amplified by the fact that functional, computer-generated word searches have existed for decades. As one Reddit commenter pointed out, "aren’t there existing word search generators that actually produce a proper word search? Instead, they used AI with no solutions. Pathetic." Another commenter recounted programming a fully functional word search generator over 40 years ago, long before the advent of modern generative AI, underscoring that the technology to create solvable puzzles has been mature for a significant period.

Traditional word search generators operate on deterministic algorithms. They take a list of words, strategically place them within a grid, and then fill the remaining empty spaces with random letters, ensuring that all listed words are present and solvable. This rule-based approach guarantees functionality. Modern generative AI, on the other hand, particularly large language models (LLMs) and image generation models, operates on a probabilistic framework. They predict the next most likely sequence of tokens (text or pixels) based on vast training data, rather than strictly adhering to logical constraints unless explicitly programmed to do so with sophisticated prompt engineering and validation layers.
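The rule-based approach described above can be sketched in a few lines of Python. This is a minimal illustration, not the code of any particular generator: the function name `generate_word_search`, its parameters, and the choice of eight placement directions are all assumptions made for the example.

```python
import random
import string

# Eight directions as (row step, column step). Many children's puzzles allow
# fewer; using all eight here is an assumption of this sketch.
DIRECTIONS = [(0, 1), (1, 0), (1, 1), (-1, 1), (0, -1), (-1, 0), (-1, -1), (1, -1)]


def generate_word_search(words, size=12, seed=None, max_tries=1000):
    """Place every word in a size x size grid, then fill the gaps.

    Returns (grid, answer_key): grid is a list of row strings and answer_key
    maps each word to (row, col, row_step, col_step) of its first letter.
    """
    rng = random.Random(seed)  # a fixed seed makes the puzzle reproducible
    grid = [[None] * size for _ in range(size)]
    answer_key = {}

    # Longest words first: they have the fewest legal positions.
    for word in sorted((w.upper() for w in words), key=len, reverse=True):
        if len(word) > size:
            raise ValueError(f"{word!r} does not fit in a {size}x{size} grid")
        for _ in range(max_tries):
            dr, dc = rng.choice(DIRECTIONS)
            r, c = rng.randrange(size), rng.randrange(size)
            cells = [(r + i * dr, c + i * dc) for i in range(len(word))]
            # A spot is legal if every cell is in bounds and is either
            # empty or already holds the letter we need (a crossing).
            fits = all(
                0 <= rr < size and 0 <= cc < size and grid[rr][cc] in (None, ch)
                for (rr, cc), ch in zip(cells, word)
            )
            if fits:
                for (rr, cc), ch in zip(cells, word):
                    grid[rr][cc] = ch
                answer_key[word] = (r, c, dr, dc)
                break
        else:
            raise RuntimeError(f"could not place {word!r}")

    # Only now are the remaining cells filled with random letters.
    rows = [
        "".join(ch if ch is not None else rng.choice(string.ascii_uppercase) for ch in row)
        for row in grid
    ]
    return rows, answer_key
```

Calling `generate_word_search(["BUNNY", "EGG", "CHOCOLATE"], size=12, seed=1)` returns the grid together with an answer key. Because the words are written into the grid before any filler letters are added, and a word may only cross another on a matching letter, every listed word is present by construction.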

This fundamental difference explains why an AI might produce a visually plausible word search grid and a list of words, but fail to ensure the words are actually embedded. It "looks" like a word search but lacks the underlying functional integrity. The sarcastic observation by u/Life-Celebration-747, "And they want AI to take over the military," humorously yet pointedly highlights the potentially catastrophic consequences of deploying AI in critical systems without rigorous validation and a deep understanding of its limitations.

The Critical Gap: Human Oversight and Internal Error-Checking

The "broken word search" thread exemplified one of the most concerning themes frequently discussed by AI critics: the technology’s inherent incapacity for internal error-checking. AI, in its current iteration, "doesn’t know what it doesn’t know." It can generate content that appears coherent or correct on the surface, but it lacks the meta-cognitive ability to evaluate its own output for logical consistency, factual accuracy, or adherence to functional requirements.
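For a word search, adherence to functional requirements is something a short script can test before publication, as a complement to human review rather than a replacement for it. The sketch below is one possible check, assuming the grid is available as a non-empty list of equal-length strings; the names `find_word` and `missing_words` are hypothetical, and the sample grid is invented for illustration and is not the grid from the Reddit post.

```python
# Eight directions as (row step, column step); an assumption of this sketch.
DIRECTIONS = [(0, 1), (1, 0), (1, 1), (-1, 1), (0, -1), (-1, 0), (-1, -1), (1, -1)]


def find_word(grid, word):
    """Return (row, col, row_step, col_step) of the first match, or None."""
    word = word.upper()
    rows, cols = len(grid), len(grid[0])
    for r in range(rows):
        for c in range(cols):
            for dr, dc in DIRECTIONS:
                # Skip directions in which the word would run off the grid.
                end_r = r + (len(word) - 1) * dr
                end_c = c + (len(word) - 1) * dc
                if not (0 <= end_r < rows and 0 <= end_c < cols):
                    continue
                if all(grid[r + i * dr][c + i * dc] == ch for i, ch in enumerate(word)):
                    return (r, c, dr, dc)
    return None


def missing_words(grid, words):
    """List the words that cannot be found anywhere in the grid."""
    return [w for w in words if find_word(grid, w) is None]


# Invented sample grid: two of the listed words are present, one is not.
sample_grid = [
    "BUNNY",
    "XEGGQ",
    "ZZZZZ",
]
print(missing_words(sample_grid, ["BUNNY", "EGG", "CHOCOLATE"]))  # ['CHOCOLATE']
```

A publishing pipeline could refuse to release any puzzle for which `missing_words` returns a non-empty list, leaving human editors to judge the things a script cannot, such as whether the word list suits the intended audience.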

This limitation places an immense responsibility on human operators. When content generated by AI is published without a crucial "human in the loop" review process, the consequences can range from minor embarrassments, like an unsolvable word search, to much more severe issues. In journalism, this could lead to the publication of fabricated facts or misleading narratives. In educational materials, it could result in incorrect information being disseminated. In critical fields, such as medical diagnostics or legal advice, unchecked AI output could have dire real-world implications.

The incident serves as a potent reminder that while AI can be an incredibly powerful tool for augmentation and acceleration, it is not a substitute for human discernment, critical thinking, and quality control. The process of content creation, particularly for public consumption, requires a multi-layered approach where AI’s efficiency is balanced by human expertise to verify, refine, and validate the output. Publishers who neglect this vital step, driven perhaps by cost-cutting measures or an overestimation of AI’s current capabilities, risk not only their reputation but also the trust of their audience.

Broader Implications for Content Integrity and Public Trust

The case of the impossible word search transcends a mere online curiosity; it touches upon profound implications for content integrity in the digital age. As AI-generated content becomes increasingly ubiquitous, the ability of consumers to distinguish between human-crafted and machine-crafted material, and more importantly, to trust its veracity and quality, becomes a critical challenge.

The ease with which such a flawed piece of content can enter the public domain, particularly one aimed at children, raises questions about the editorial standards in a rapidly evolving publishing environment. If a simple word search can bypass quality checks, what about more complex and impactful content like news articles, educational textbooks, or policy documents?

This incident reinforces the need for greater transparency regarding the use of AI in content creation. While outright labeling of AI-generated content is a subject of ongoing debate, the underlying principle of ensuring quality and accuracy, regardless of the creation method, must remain paramount. The potential for AI to undermine quality in creative and informational fields is a significant concern for professionals who value craftsmanship and factual rigor.

Economic Drivers and Ethical Considerations

The economic pressures driving the adoption of AI in content creation are undeniable. In a competitive digital marketplace, the ability to produce more content faster and cheaper can be a significant advantage. Publishers, both large and small, are constantly seeking ways to optimize their operations and maximize output. AI offers a compelling solution to these economic challenges.

However, this pursuit of efficiency must be balanced with ethical considerations. The primary ethical concern highlighted by the word search saga is the responsibility of publishers to their audience. Publishing demonstrably flawed content, whether through negligence or deliberate corner-cutting, erodes trust. For children’s content, this concern is amplified, as young audiences are particularly vulnerable to poorly executed or misleading materials.

Furthermore, the incident contributes to the ongoing debate about the value of human labor in creative industries. While AI can automate certain tasks, the unique human capacities for creativity, empathy, critical judgment, and error correction remain indispensable. The risk of devaluing human input in favor of purely automated processes is not just an economic one but also an ethical one concerning the preservation of quality and integrity in content production.

Looking Ahead: The Future of AI in Content Creation

The viral Reddit word search serves as a powerful, if somewhat humorous, cautionary tale. It underscores that while AI is a transformative technology with immense potential, its deployment requires careful consideration, robust validation processes, and an unwavering commitment to human oversight.

The future of AI in content creation will likely involve increasingly sophisticated "human-in-the-loop" systems, where AI handles the heavy lifting of generation, but human editors, fact-checkers, and quality assurance specialists provide the essential layers of review and refinement. This collaborative model, rather than full automation, appears to be the most responsible path forward for leveraging AI’s strengths while mitigating its weaknesses.

As AI models continue to evolve, they may develop more advanced capabilities for self-correction and logical reasoning. Until then, the responsibility for ensuring the quality, accuracy, and functionality of AI-generated content rests firmly with the human agents who choose to deploy these powerful tools. The "impossible" word search serves as a clear and compelling reminder that in the rush to embrace technological advancement, the fundamental principles of quality and reliability must never be overlooked. The internet may be chaotic, but the expectation for trustworthy and functional content remains.