The rapid integration of artificial intelligence into the software development lifecycle has moved beyond simple code generation, fundamentally altering how technical professionals acquire new skills and solve complex problems. According to a comprehensive February survey conducted by Stack Overflow in partnership with OpenAI, the landscape of technical education is undergoing a period of intense consolidation and transformation. While 64% of developers now report using AI as a primary learning tool—a significant increase from 44% in the 2025 Developer Survey and 37% in 2024—this shift is accompanied by a growing "trust gap" and concerns over the long-term impact on cognitive development. The data, gathered from nearly 900 respondents, suggests that while AI offers unprecedented efficiency, it introduces a new "AI tax" on the learning process, characterized by a lack of provenance and an increased need for human-led validation.

The Chronology of Disruption: From Pandemic Shifts to AI Dominance

The current state of developer learning is the result of a multi-year trajectory that began with the forced digitalization of education during the COVID-19 pandemic. Before 2020, traditional face-to-face instruction was the gold standard for technical education. A longitudinal study of students at a Chinese university shows how durable that preference has been: 65% of students viewed in-person courses as more effective prior to 2020, and despite the proliferation of sophisticated online tools, the share dipped only slightly to 63% by 2023.

As pandemic restrictions eased, the emergence of Large Language Models (LLMs) provided a new alternative to both traditional classrooms and standard online resources. The timeline of this adoption is stark: in 2024, 37% of developers reported using AI to learn, significant but well short of a majority. By the time the 2025 annual survey was conducted, that share had risen to 44%. The most recent pulse survey from February shows that the trend has accelerated further, with 64% of developers now using AI to bridge knowledge gaps. Two factors drive this rapid adoption: the ability to "start from scratch" on a new project (cited by 28.2% of users) and overall efficiency (26.3%).
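The adoption figures reported across the three surveys can be read two ways: as percentage-point gains or as relative growth. The short Python sketch below works through that arithmetic. The labels and the `changes` helper are illustrative names, and because the surveys may differ in sample and methodology, the comparison is indicative rather than exact.

```python
# Share of developers using AI to learn, as reported in the article (percent).
adoption = [("2024", 37.0), ("2025", 44.0), ("February pulse", 64.0)]

def changes(series):
    """Return (from_label, to_label, point_change, relative_change_pct) per step."""
    out = []
    for (a_label, a), (b_label, b) in zip(series, series[1:]):
        out.append((a_label, b_label, b - a, (b - a) / a * 100))
    return out

for a_label, b_label, points, relative in changes(adoption):
    print(f"{a_label} -> {b_label}: {points:+.0f} points, {relative:+.1f}% relative")
```

Read this way, the move from 44% to 64% is a 20-point gain, or roughly 45% relative growth, compared with a 7-point gain between the two earlier surveys.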

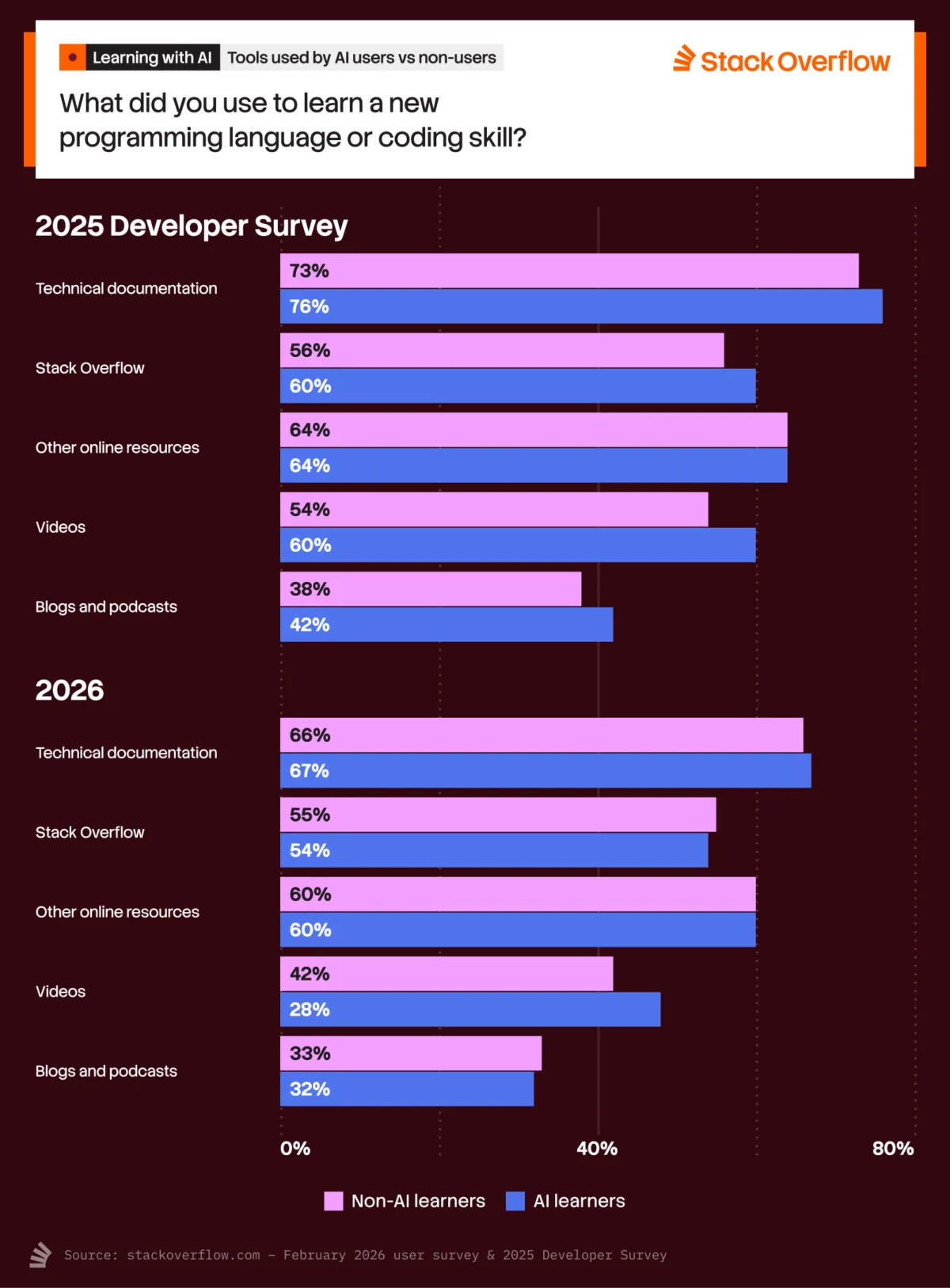

The Consolidation of Learning Resources

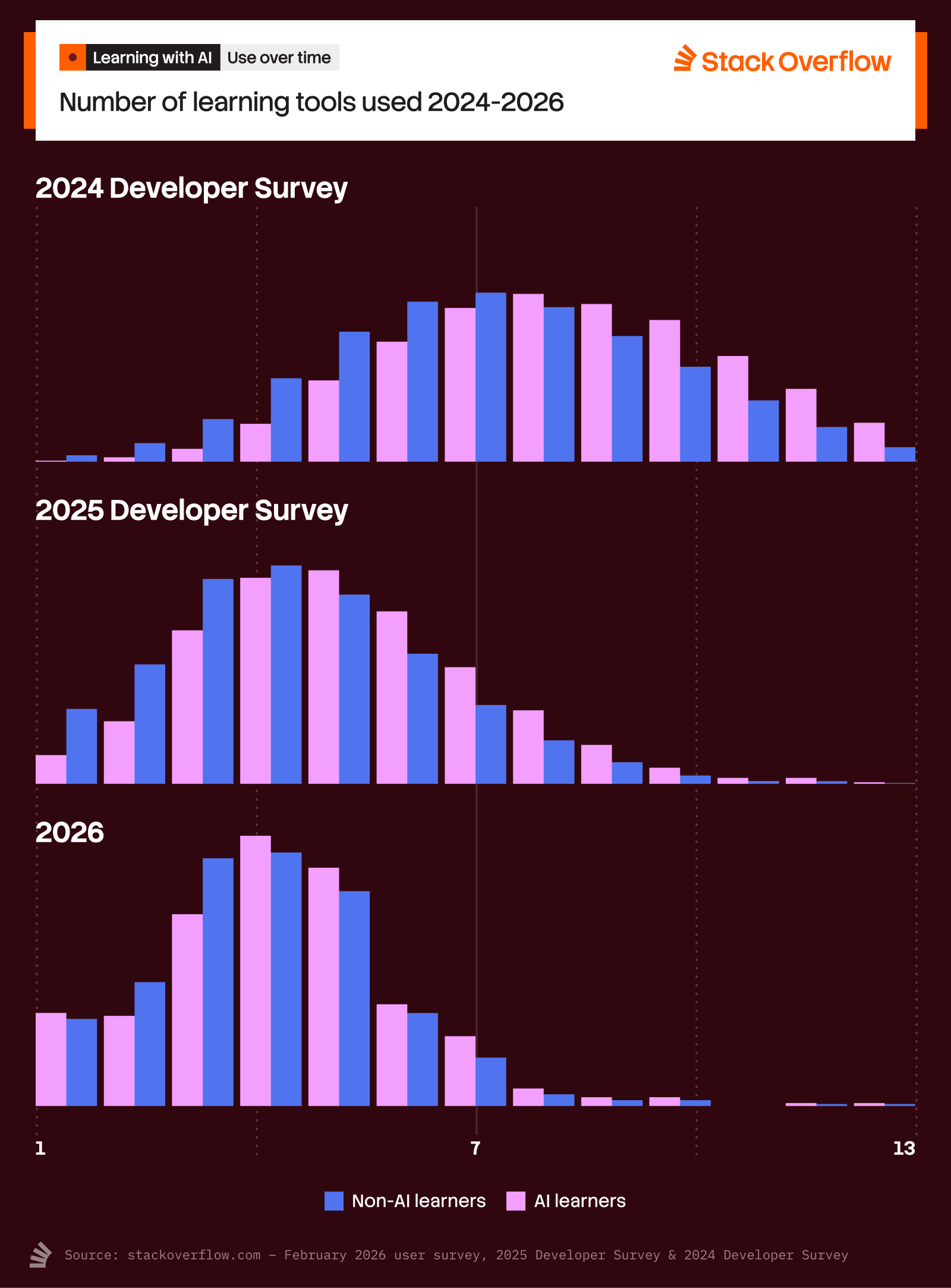

One of the most striking findings in the recent data is the radical consolidation of the developer’s toolkit. Historically, learning to code was a fragmented process involving a wide array of resources, including textbooks, video tutorials, community forums, documentation, and peer mentorship. In 2024, approximately 49% of developers reported using eight or more distinct resources to learn their craft. By early 2025, that figure plummeted to 9%, and the most recent pulse survey indicates it has dropped further to just 7%.

This "less is more" trend is driven largely by younger developers (ages 18–34), who are increasingly turning to AI as a centralized hub for information. However, this consolidation is not a total replacement of traditional methods. Instead, it represents a shift toward a "validation-based" workflow. While developers are using fewer tools overall, they are using AI in conjunction with established sources to ensure accuracy. Current data shows that 58% of respondents use both AI and technical documentation, 54% use AI alongside search engines and forums, and 50% continue to utilize Stack Overflow to verify the outputs generated by AI.

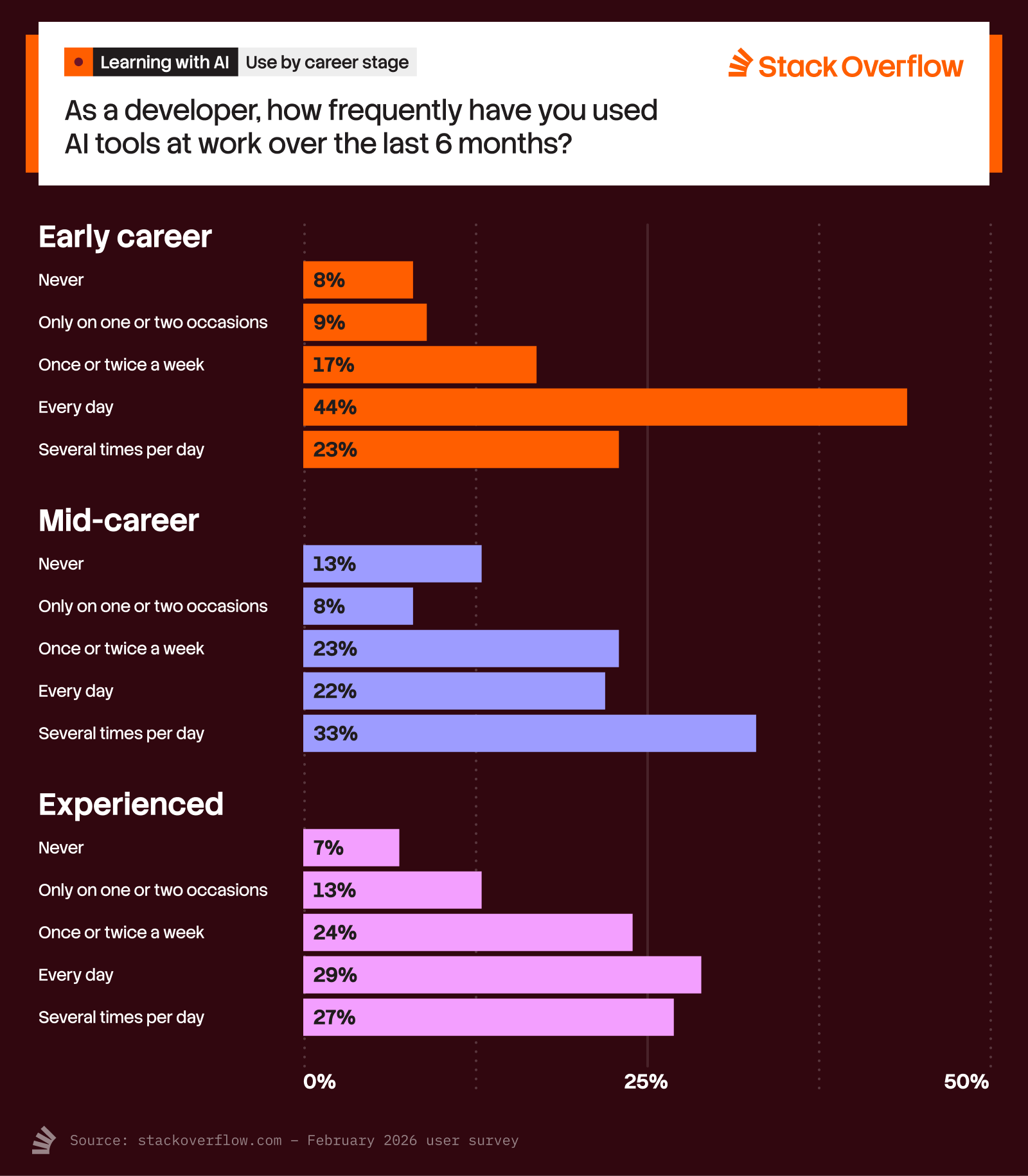

Career Experience and AI Reliance

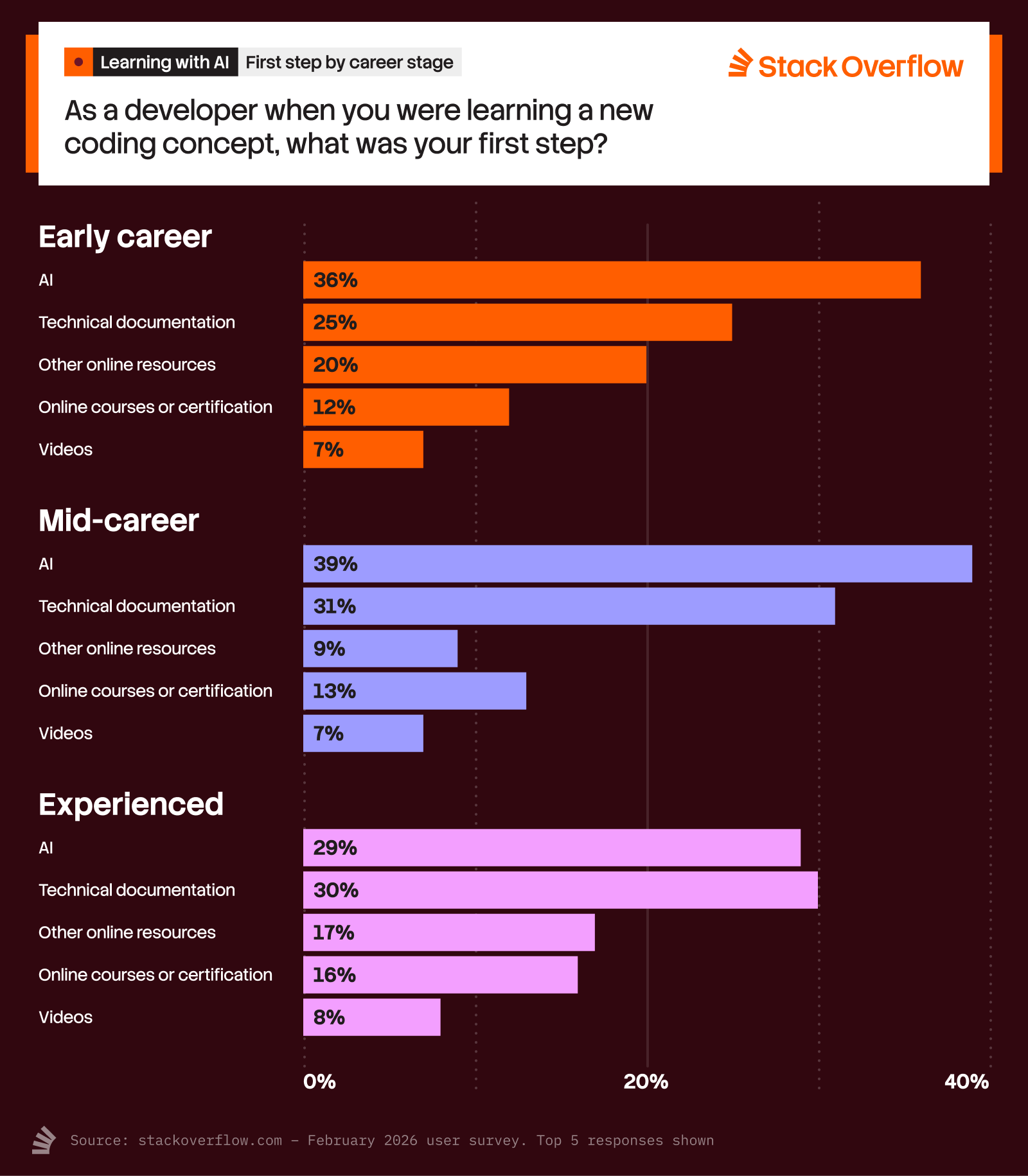

The data reveals a clear divide in how developers at different career stages interact with AI. Early-career and mid-career professionals are the most likely to treat AI as their first point of contact for a problem. Specifically, 36% of early-career developers and 39% of mid-career developers turn to AI first when they encounter a learning obstacle. In contrast, experienced developers maintain a more balanced approach, showing a slight preference for technical documentation (30%) over AI tools (29%).

This discrepancy is often tied to the "time barrier." Among developers who do not use AI to learn, 35% cite a lack of time as their primary obstacle, far outweighing low motivation (11%) or uncertainty about where to start (10%). Among those who have adopted AI, the time barrier drops to a mere 7%, suggesting that AI is successfully addressing the "just-in-time" learning needs of busy professionals. However, this speed comes at a cost to which experienced developers are often more sensitive: the loss of technical depth and the risk of "cognitive offloading."

The Trust Gap and the "AI Tax"

Despite its efficiency, AI continues to suffer from a significant trust deficit. Among developers who hesitate to use AI for learning, 38% cite a lack of trust in the results as their primary barrier. This distrust is more pronounced among those who use AI less frequently; 47% of weekly users express skepticism, compared to 38% of daily users.

Information architect Jessica Talisman describes this phenomenon as a breakdown in the "documentary chain." LLMs are designed to mimic the appearance of authoritative knowledge, often generating citations and footnotes that appear legitimate but lack true provenance. In traditional data systems and archival records, meta-properties and relationship trails establish authority. By obscuring the sources and background of its answers, AI imposes an "AI tax" on the learner: the student or developer must spend additional time and cognitive effort verifying the information against trusted sources such as Stack Overflow or official documentation to ensure that the "knowledge" they are acquiring is not a hallucination.

This concern mirrors broader societal anxieties. A recent Brookings poll found that students, parents, and teachers all identified "undermining cognitive development" as the number one risk of using AI in educational settings. The fear is that by relying too heavily on AI to synthesize information, learners may lose the ability to engage in critical thinking and independent problem-solving.

The Human Element in a Machine-Driven World

The research suggests that for AI to be truly effective in a learning environment, some degree of human intervention remains essential. The study of Chinese university students found that "humorous teachers" significantly improved learning and memorization outcomes for 62% of students. This underscores the importance of engagement and personality—elements that current AI models struggle to replicate.

Similarly, the debate over remote versus in-office work highlights the value of human interaction. Surveys by Owl Labs across the U.S. and Europe indicate that hybrid and in-office environments are still favored for their ability to maintain leadership visibility, reinforce team cohesion, and improve collaboration. For developers, the "office" or the "community forum" acts as a social learning environment where nuance and context are provided by peers. If AI is to continue its ascent as a learning tool, developers indicate it must become more transparent and human-centric.

The Future of AI in Professional Development

As AI moves from a learning aid to a professional representative, developer skepticism increases. While 57% of developers agree that AI has improved as a learning tool, only 44% believe that a certification for skills learned via AI platforms would be valuable. The prospect of agentic AI—autonomous agents representing developers in job searches—is met with even greater caution.

When asked about the conditions under which they would use an AI-powered job platform, the responses were telling:

- 46.2% demanded human intervention at every step.

- 42.2% required a transparent data usage policy.

- 40.2% wanted recommendations for lateral job positions they hadn’t considered.

- 40.0% cited low costs or free access as a prerequisite.

- 28.5% stated they would not use such a platform regardless of its features.

These figures illustrate that while developers are comfortable using AI for "starting from scratch" on code, they are far less willing to cede control over their career trajectories or professional credentials to an automated system.

Implications for the Tech Industry

The findings from Stack Overflow and its partners suggest that we are entering a "verification era" of technical learning. The role of the developer is shifting from a "generator" of code to an "editor" and "validator" of AI-generated content. This has profound implications for how companies train their staff and how educational institutions design their curricula.

First, the "AI tax" means that the time saved in initial generation is often partially lost in the verification phase. Organizations must account for this in their productivity metrics. Second, the decline in the number of tools used by developers suggests that a few major platforms will hold immense power over the "truth" in technical education, making the accuracy and transparency of those models a matter of industry-wide importance.
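The first point can be made concrete with a back-of-the-envelope calculation. The Python sketch below is illustrative only: the function name and every number in it are hypothetical assumptions, not figures from the survey.

```python
def net_minutes_saved(baseline_min, ai_generation_min, verification_min):
    """Time saved by using AI once verification (the "AI tax") is counted.

    All inputs are hypothetical per-task estimates in minutes.
    """
    gross_saving = baseline_min - ai_generation_min
    return gross_saving - verification_min

# Hypothetical task: 60 min unaided, 15 min with AI, 20 min spent verifying.
gross = 60 - 15
net = net_minutes_saved(60, 15, 20)
print(f"gross saving: {gross} min, net saving after verification: {net} min")
```

Under these assumed numbers, a 45-minute gross saving shrinks to 25 minutes once verification is included. If verification took longer than the gross saving, the result would be negative, which is the case a productivity metric based only on generation time would miss.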

Ultimately, while AI has become the first source for answers for a new generation of developers, it has not yet become the final authority. The continued reliance on human-curated documentation and community-driven platforms like Stack Overflow suggests that the "human in the loop" is not just a safety measure, but a fundamental requirement for the integrity of technical knowledge. As AI tools continue to evolve, the challenge for the industry will be to reduce the "AI tax" by improving provenance and building tools that enhance, rather than replace, the human capacity for critical learning.