Washington, D.C. – In a bold legislative move that could significantly reshape the future of artificial intelligence infrastructure in the United States, Senator Bernie Sanders (I-VT) and Representative Alexandria Ocasio-Cortez (D-NY) today introduced companion legislation that would impose an immediate moratorium on the construction of any new data center exceeding a peak power load of 20 megawatts. The proposed ban, introduced simultaneously in both chambers of Congress, seeks to halt the rapid expansion of facilities powering the AI industry until comprehensive federal regulations for artificial intelligence are in place. The initiative comes amid growing public apprehension about AI’s societal impact and increasing scrutiny of the energy and environmental footprint of the data centers that serve as its operational backbone.

The Escalating Demand for AI Infrastructure and Its Environmental Toll

The United States has witnessed an unprecedented explosion in data center construction over the past decade, a trend dramatically accelerated by the advent and rapid deployment of sophisticated artificial intelligence models. These facilities, the physical embodiment of the digital age, are the computational engines that train and run everything from advanced language models to complex scientific simulations. However, their proliferation comes at a significant cost, primarily in terms of energy consumption and environmental impact.

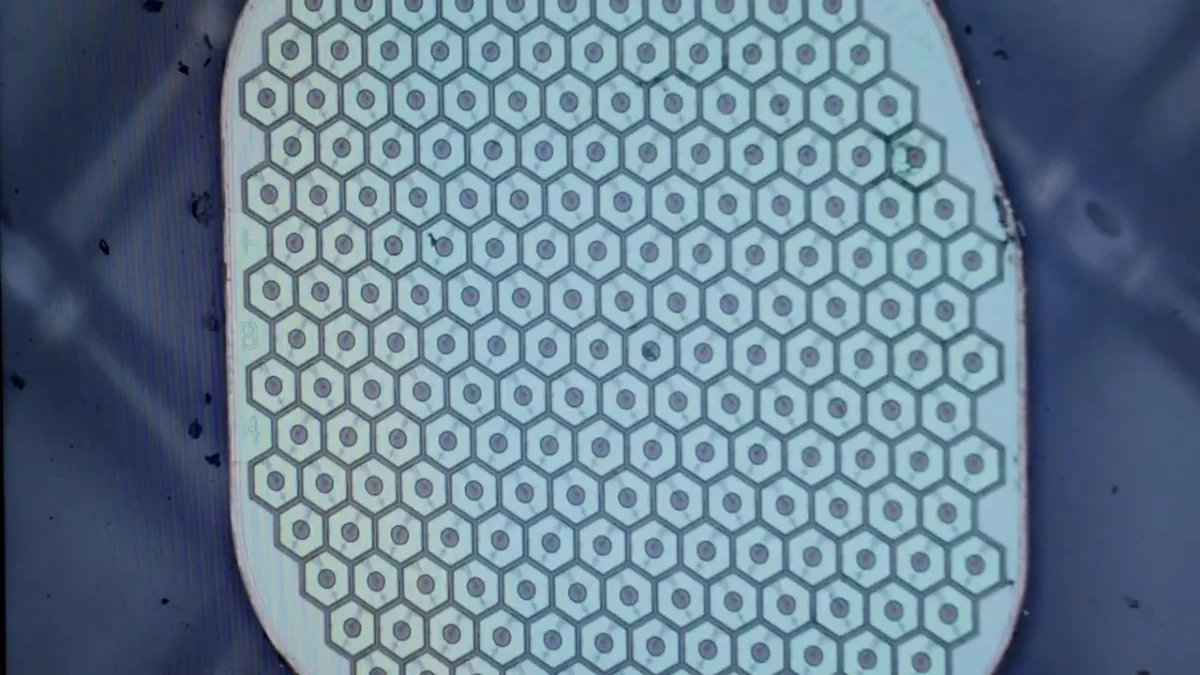

AI workloads, particularly the training of large foundational models, are extraordinarily compute-intensive and, by extension, energy-intensive. Published estimates put a single training run for a large language model at roughly the annual electricity use of more than a hundred U.S. households, with hundreds of tons of associated carbon emissions. This demand has spurred a construction boom, transforming rural landscapes and suburban areas into digital industrial parks. Major tech companies are pouring billions into new facilities, with some regions, like Northern Virginia, becoming global epicenters for data center development. According to industry estimates, data centers currently account for approximately 1-2% of global electricity consumption, a figure projected to rise substantially as AI adoption accelerates. Some forecasts suggest this could reach 4% or even more by 2030, putting immense strain on existing electrical grids and renewable energy targets.

Beyond electricity, data centers are also prodigious consumers of water, primarily for cooling their vast arrays of servers. While modern cooling technologies have improved efficiency, large facilities can still consume millions of gallons of water annually, raising concerns in drought-prone regions and exacerbating local water stress. The proposed 20-megawatt threshold for the ban is significant; facilities of this size are considered large-scale operations, capable of housing tens of thousands of servers and drawing power comparable to a small town. The cumulative effect of numerous such centers, often clustered together for optimal connectivity and infrastructure, presents a formidable environmental challenge.

A Progressive Push for Regulatory Control

Senator Sanders and Representative Ocasio-Cortez, both prominent progressive voices in their respective chambers, have long advocated for robust governmental oversight in areas ranging from climate change to labor rights. Their joint legislative effort reflects a deep-seated concern that the rapid, unregulated expansion of AI and its supporting infrastructure risks exacerbating existing societal inequalities, eroding job security, and undermining environmental sustainability goals.

The core of their argument is that AI development has outpaced regulatory frameworks, creating a vacuum that could lead to unintended and potentially catastrophic consequences. "AI is far more dangerous than nukes. So why do we have no regulatory oversight?" Elon Musk has remarked, a sentiment that Sanders’ office echoed in its official statement and that is shared by some of the tech industry’s most influential figures. The proposed moratorium is designed as a pause button, compelling Congress to address these complex issues before further irreversible commitments are made to infrastructure and technological pathways.

The companion legislation outlines a comprehensive vision for AI regulation, extending far beyond merely limiting data center growth. Key provisions include:

- Government Review and Certification of AI Models: Requiring federal agencies to vet and certify advanced AI models before their public release, assessing their safety, bias, and potential societal impacts. This aims to establish a preemptive oversight mechanism, moving away from a reactive approach to AI harms.

- Protections Against AI-Driven Job Displacement: Mandating measures to mitigate the economic disruption caused by AI automation, potentially through retraining programs, universal basic income initiatives, or taxation on automated labor. This reflects a long-standing concern of both legislators regarding the future of work.

- Limiting the Environmental Impact of Data Infrastructure: Beyond the immediate moratorium, the bill calls for long-term policies to reduce the carbon footprint and water usage of data centers, potentially through mandates for renewable energy sourcing, efficiency standards, and responsible siting.

- Requirement for Union Labor in Construction: Insisting that data center construction projects utilize unionized workforces, a move aimed at ensuring fair wages, benefits, and working conditions in a rapidly growing sector. This aligns with the legislators’ broader pro-labor agenda.

- Prohibition of Advanced Chip Exports to Non-Compliant Countries: Seeking to prevent the export of critical AI hardware to nations that do not adhere to similar stringent AI regulatory and ethical standards. This provision underscores a geopolitical dimension, aiming to establish global norms for responsible AI development while safeguarding national interests.

These multifaceted demands underscore a belief that AI’s development must be inextricably linked to public welfare, environmental stewardship, and democratic accountability, rather than solely driven by corporate interests and technological acceleration.

Echoes of Concern from Silicon Valley and the Public

The legislators’ initiative is bolstered by a growing chorus of warnings from within the very industry that stands to be most affected. Elon Musk, CEO of Tesla and SpaceX, has repeatedly issued dire warnings about uncontrolled AI, and his statement quoted above lends weight to the case for oversight. Similar anxieties have been voiced by other leading figures:

- Demis Hassabis (CEO, Google DeepMind): Has emphasized the need for careful development and robust safety mechanisms, likening AI to a "new form of intelligence" that requires profound ethical consideration.

- Dario Amodei (CEO, Anthropic): A proponent of "responsible scaling" for AI, Amodei has highlighted the existential risks posed by increasingly powerful AI systems and called for greater transparency and oversight.

- Sam Altman (CEO, OpenAI): While a leading advocate for AI’s potential, Altman has also testified before Congress, urging lawmakers to consider regulation to mitigate risks such as misinformation, job displacement, and the potential for AI to become "superintelligent."

- Geoffrey Hinton (Nobel Prize winner and "Godfather of AI"): Famously left Google to speak more freely about the dangers of AI, warning of misuse, job loss, and even existential threats if the technology is not properly controlled.

These statements from individuals at the forefront of AI development lend significant weight to the argument for regulatory intervention, demonstrating that concerns are not limited to outside critics but are deeply felt within the tech community itself.

Public sentiment also appears to align with the call for caution. A March 2026 Pew Research poll found that Americans are far more concerned than excited about artificial intelligence: only 10% of those surveyed expressed more excitement than concern regarding AI’s rise, while nearly 60% voiced greater apprehension. The concerns cited included potential job losses, the spread of misinformation, privacy violations, and the ethical implications of autonomous decision-making by AI systems. This broad public skepticism, particularly toward technology perceived as disruptive or harmful to society, strengthens the hand of lawmakers seeking to implement stricter controls.

The Battle Ahead: Lobbying, Geopolitics, and Economic Interests

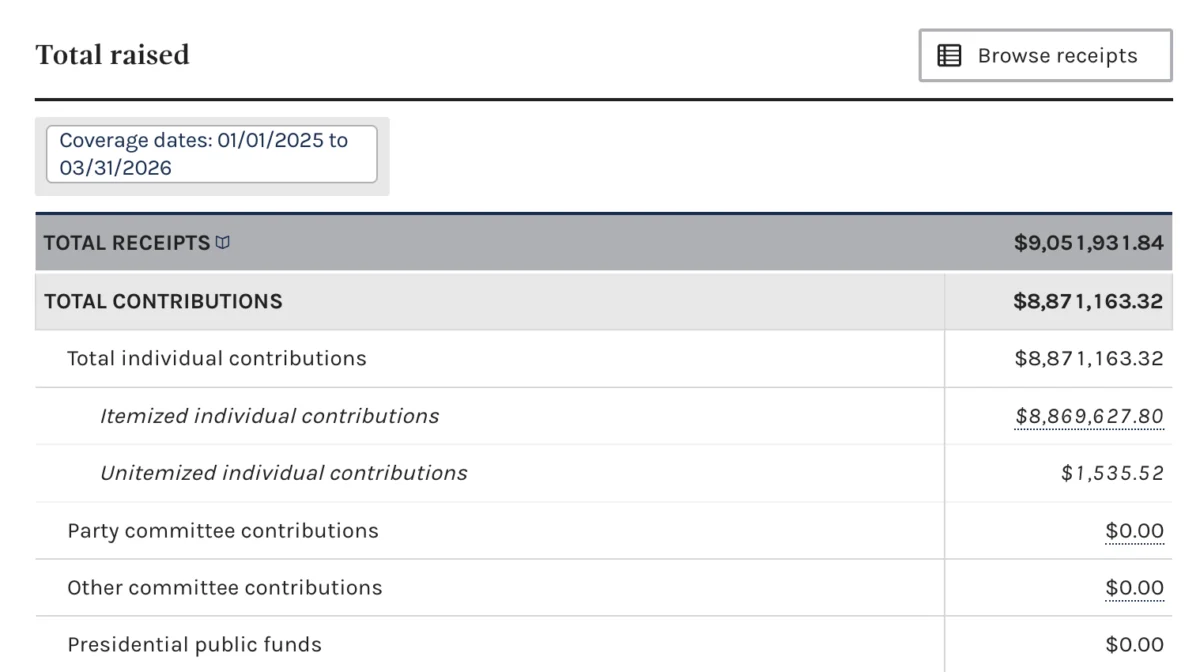

Despite public support and some backing from within the industry, the proposed legislation faces a formidable uphill battle in Congress. The AI industry, a rapidly expanding sector with immense economic potential, has ramped up its lobbying efforts significantly in recent years. Tech giants and AI startups alike have invested heavily in political spending, seeking to influence policy decisions and prevent what they perceive as stifling regulations. These lobbying efforts often highlight the economic benefits of AI, including job creation, increased productivity, and the potential for groundbreaking scientific and medical advancements.

A primary counter-argument to regulatory pauses or bans is the intense geopolitical competition surrounding AI. The narrative of an "AI arms race" with China is a powerful one in Washington, often invoked to argue against any measures that might slow down American innovation. Proponents of rapid, unfettered AI development contend that strict regulations in the U.S. could cede technological leadership to rival nations, potentially compromising national security and economic dominance. The fear is that if the U.S. imposes a moratorium, other countries will continue their development unchecked, leaving the U.S. at a strategic disadvantage. This concern is particularly potent in the current political climate, where technological leadership is increasingly seen as synonymous with global power.

Furthermore, the economic implications of a data center ban are not trivial. While the bill aims to address environmental and social concerns, it could also impact job creation in construction, engineering, and operations. States and localities often compete fiercely to attract data centers, enticed by the promise of investment, tax revenue, and high-paying tech jobs, even if the direct employment numbers are often lower than initially projected. Balancing these economic incentives against the environmental and regulatory concerns will be a central challenge for lawmakers.

The legislative process itself is lengthy and complex. As companion bills, the Sanders and Ocasio-Cortez proposals would need to pass in both the Senate and the House of Representatives, navigating committee reviews, amendments, and floor votes. Given the deeply divided nature of Congress and the powerful interests involved, such a comprehensive and restrictive piece of legislation faces significant hurdles to becoming law. This bill might be seen, therefore, as an opening bid, a strong statement designed to initiate a broader debate on AI governance rather than a sure path to immediate enactment.

Potential Ramifications: A Crossroads for AI Development

Should such a ban or stringent regulatory framework be enacted, the ramifications for the AI industry, energy infrastructure, and the broader global technological landscape would be profound.

For the AI industry in the United States, a moratorium on large data centers could force a significant recalibration. Companies might be compelled to use existing infrastructure more efficiently, innovate in less energy-intensive AI architectures, or even explore moving parts of their operations to countries with fewer restrictions. This could either spur a new wave of sustainable AI innovation or push some cutting-edge development overseas, creating opportunities for regulatory arbitrage.

The impact on energy policy would also be substantial. A pause in data center expansion would alleviate some pressure on stressed electrical grids, particularly in regions experiencing rapid growth. It could also accelerate the push for truly green energy solutions for existing facilities, as companies seek to mitigate their environmental footprint in a more scrutinized environment. Utilities, which have been scrambling to keep up with surging demand from data centers, would have an opportunity to reassess and plan for a more sustainable energy future.

Globally, the U.S. taking a leading role in regulating AI infrastructure could set a precedent. While the European Union is ahead with its comprehensive AI Act, the U.S. approach, particularly regarding infrastructure and environmental impact, could influence other nations considering similar measures. This could foster a global movement towards more responsible and sustainable AI development, or conversely, create a patchwork of regulations that complicate international tech operations.

The introduction of this legislation marks a critical juncture in the ongoing debate about the future of artificial intelligence. It represents a clear challenge to the prevailing narrative of unbridled technological progress, demanding that innovation be tempered with foresight, ethical consideration, and a commitment to planetary health and social equity. Whether this bold proposal ultimately succeeds or not, it has undeniably elevated the discourse on AI’s true costs and the urgent need for a regulatory framework commensurate with its transformative power. The coming months will reveal the extent of political will to confront these complex issues and steer the course of AI development toward a more sustainable and equitable future.