In a move signaling the growing integration of advanced artificial intelligence into critical financial infrastructure, Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell recently convened a meeting with top executives from the nation’s largest banks. Their main message was strong encouragement for these institutions to deploy Anthropic’s newly unveiled Mythos AI model to detect vulnerabilities in their systems. The endorsement, first reported by Bloomberg, marks a moment in which government officials are actively steering the private sector toward a specific technological solution to fortify national economic security.

While JPMorgan Chase had previously been identified as an initial partner organization granted early access to the sophisticated model, the recent high-level engagement has expanded the scope dramatically. Reports indicate that other financial giants, including Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley, are now actively engaged in testing Mythos, underscoring a rapid and broad adoption interest across Wall Street. This widespread exploration by leading financial entities suggests a collective recognition of the escalating cybersecurity threats and the potential of advanced AI to provide a robust defense.

The Dawn of Mythos: Capabilities and Controversy

Anthropic formally announced the Mythos model earlier this week, generating considerable buzz within the tech and cybersecurity communities. The company initially indicated that access to Mythos would be severely restricted, a decision it attributed, in part, to the model’s unexpected and exceptional proficiency in uncovering security vulnerabilities, despite not being explicitly trained for cybersecurity applications. This claim of the AI being "too good" sparked a dual reaction: some viewed it as a legitimate concern for responsible deployment, while others, including prominent tech commentators like Ed Zitron, suggested it might be a calculated marketing strategy to generate hype or a shrewd enterprise sales tactic designed to create exclusivity and demand.

Mythos, a large language model (LLM) developed by Anthropic, is built upon the company’s "Constitutional AI" principles, aiming for safer and more aligned AI systems through a set of guiding rules. While its primary architectural design focused on general reasoning and complex problem-solving, its emergent capability to identify sophisticated security flaws has positioned it as a potential game-changer in a field desperately seeking innovation. The model’s ability to sift through vast datasets of code, network configurations, and system logs to pinpoint anomalies and potential exploits offers a scale and speed that human analysts struggle to match. This capacity is particularly appealing to financial institutions, which operate some of the most intricate and targeted digital infrastructures globally.

A Paradoxical Stance: Government’s Dual Approach to Anthropic

The federal government’s dual approach to Anthropic presents a fascinating and somewhat contradictory narrative. On the one hand, top financial regulators are actively advocating for the use of Mythos within the critical banking sector, underscoring its perceived value to national economic security. On the other hand, Anthropic is currently embroiled in a legal battle with the Trump administration, specifically the Department of Defense (DoD), over its designation as a "supply-chain risk." This contentious label emerged after negotiations between Anthropic and the government collapsed, reportedly due to the company’s insistence on stringent limitations on how federal agencies could use its AI models, particularly for military applications or surveillance.

The lawsuit, filed by Anthropic in March 2026, challenges the DoD’s classification, which carries significant implications for the company’s ability to secure government contracts and collaborate on sensitive projects. The Pentagon’s designation in early March 2026, which cited concerns over potential vulnerabilities or dependencies in Anthropic’s supply chain, stands in stark contrast to the Treasury and Federal Reserve’s active encouragement of its technology for financial stability. This divergence highlights the complex and often fragmented nature of government policy on emerging technologies, where different agencies may hold differing risk assessments and strategic priorities.

The Escalating Cyber Threat Landscape in Finance

The push for AI-driven cybersecurity solutions like Mythos is not arbitrary; it is a direct response to an increasingly sophisticated and relentless wave of cyber threats targeting the financial sector. According to a 2025 report by the Financial Services Information Sharing and Analysis Center (FS-ISAC), cyberattacks against financial institutions increased by 15% year-over-year, with ransomware and sophisticated phishing campaigns leading the charge. The average cost of a data breach in the financial industry exceeded $6 million in 2025, a figure that does not fully capture the reputational damage and long-term erosion of customer trust.

Traditional cybersecurity measures, while essential, are often reactive and struggle to keep pace with polymorphic malware, zero-day exploits, and state-sponsored advanced persistent threats (APTs). The sheer volume of transactions, customer data, and interconnected systems within a major bank creates an attack surface of immense proportions. AI models like Mythos promise a paradigm shift, moving from reactive defense to proactive threat hunting and predictive vulnerability identification. By analyzing patterns of behavior that are too subtle for human eyes or rule-based systems, AI can potentially detect nascent threats before they fully materialize into costly breaches.

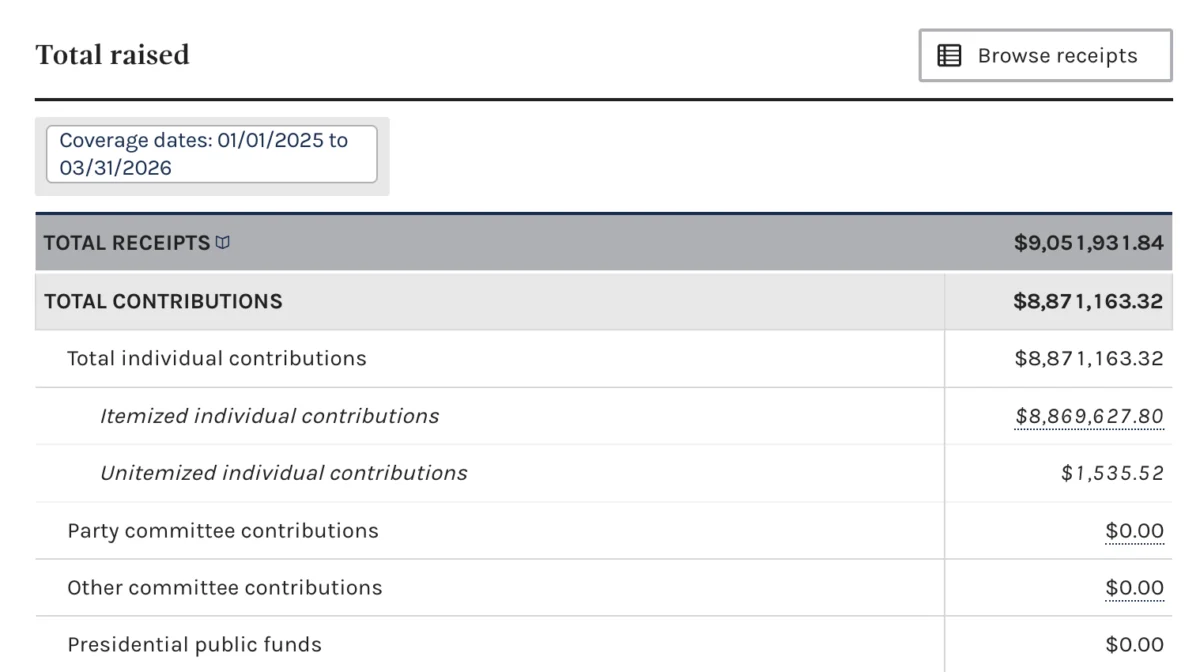

Chronology of Key Events: A Rapid Evolution

- January 2026: Anthropic intensifies development and internal testing of Mythos, observing unexpected cybersecurity capabilities.

- March 5, 2026: The Pentagon officially labels Anthropic as a "supply-chain risk" following stalled negotiations over usage limitations for government applications.

- March 9, 2026: Anthropic files a lawsuit against the Department of Defense, challenging the supply-chain risk designation, citing potential harm to its business and reputation.

- April 7, 2026: Anthropic publicly announces the Mythos AI model, highlighting its general capabilities and, notably, its "too good" performance in finding security vulnerabilities, leading to initial limited access.

- April 10, 2026: Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell hold a private meeting with leading bank executives, strongly recommending the adoption and testing of Mythos for vulnerability detection.

- April 12, 2026: News breaks via Bloomberg detailing the government’s encouragement and confirming broader testing by major financial institutions beyond initial partner JPMorgan Chase.

- Ongoing (April 2026): Financial regulators in the United Kingdom begin discussions regarding the potential risks posed by Mythos and AI in finance.

Official Responses and Inferred Reactions

While the Treasury and Federal Reserve typically do not disclose details of meetings like this one, the Bloomberg report suggests a deliberate effort to signal their endorsement. A Treasury official, if asked, might emphasize the government’s "unwavering commitment to maintaining the stability and security of the U.S. financial system, leveraging all available technological advancements to counter evolving threats." A Federal Reserve spokesperson might add that "proactive risk identification, particularly through advanced analytical tools, is paramount for safeguarding consumer assets and market integrity."

From Anthropic’s perspective, this high-level endorsement by financial regulators provides a significant validation of Mythos’s capabilities, even amidst the ongoing legal battle with the DoD. An Anthropic spokesperson, while unable to comment directly on private meetings, would likely reiterate the company’s dedication to "developing safe, aligned, and highly capable AI that serves critical societal functions, including bolstering cybersecurity for essential sectors." They might also subtly allude to their commitment to responsible deployment and transparent governance as foundational to their mission.

Banking executives, while cautiously optimistic, would likely highlight the rigorous testing phases required for any new technology integrated into their systems. An executive from one of the testing banks might state, "We are continuously evaluating cutting-edge technologies to enhance our security posture. AI models like Mythos show significant promise in identifying sophisticated vulnerabilities, and we are committed to thoroughly assessing their efficacy, scalability, and compliance with stringent regulatory requirements."

Internationally, the Financial Times reported that U.K. financial regulators are also discussing the risks associated with Mythos. This indicates a global awareness and a proactive approach to understanding the systemic implications of such powerful AI. A representative of the U.K. Financial Conduct Authority (FCA) or Prudential Regulation Authority (PRA) might stress the importance of "a robust regulatory framework for AI adoption in finance, focusing on explainability, bias mitigation, operational resilience, and cross-border cooperation to manage systemic risks."

Broader Impact and Implications for the Financial Sector

The reported endorsement of Mythos by U.S. financial regulators carries profound implications for the future of cybersecurity in the banking sector and the broader adoption of AI in finance.

Accelerated AI Adoption and Investment

This governmental push will likely accelerate the adoption of advanced AI tools across the financial industry. Banks, under regulatory pressure and facing relentless cyber threats, will increase their investment in AI research, development, and integration. This could foster a competitive landscape among AI developers to create even more sophisticated models tailored for financial security.

Reshaping Cybersecurity Strategies

Mythos and similar AI models are poised to fundamentally reshape how financial institutions approach cybersecurity. The shift will be towards more predictive, proactive, and automated threat detection and vulnerability management. Human analysts will transition from manually sifting through alerts to overseeing AI systems, validating findings, and focusing on strategic threat intelligence and response.

Regulatory Framework Evolution

The emergence of highly capable AI models like Mythos will undoubtedly compel financial regulators worldwide to develop more comprehensive and specific guidelines for AI usage. Key areas of focus will include:

- Explainability (XAI): Ensuring that the decisions and findings of AI models can be understood and audited.

- Bias and Fairness: Preventing AI from inadvertently discriminating or creating new forms of risk.

- Operational Resilience: Assessing the reliability and robustness of AI systems, especially in critical functions.

- Data Governance: Establishing clear rules for how sensitive financial data is used to train and operate AI models.

- Systemic Risk: Understanding how widespread adoption of a single powerful AI model could introduce new forms of interconnected risk.

The Vendor Lock-in Dilemma

As financial institutions become increasingly reliant on specific AI models from a handful of providers, concerns about vendor lock-in and potential single points of failure may emerge. Regulators will need to balance the benefits of cutting-edge technology with the need for diversity in solutions and robust contingency planning. The U.K. regulators’ discussions likely touch upon these systemic vulnerabilities.

Geopolitical and Competitive Landscape

The government’s mixed signals regarding Anthropic (encouragement for finance vs. supply-chain risk for defense) highlight the complex geopolitical dimensions of AI. Nations are grappling with how to foster domestic AI innovation while simultaneously mitigating national security risks associated with powerful dual-use technologies. This scenario could influence which AI companies governments choose to champion or restrict, shaping the global AI competitive landscape.

Ethical AI and Responsible Development

Anthropic’s "Constitutional AI" approach, aimed at safety and alignment, is central to its brand. The company’s insistence on usage limitations for government applications, and its legal challenge to the DoD’s resulting supply-chain risk designation, underscore its commitment to responsible AI deployment. This incident serves as a critical case study in the ongoing debate about who controls powerful AI and how ethical guidelines are enforced, particularly when national security interests are involved. The financial sector’s adoption of Mythos will be closely watched as a test case for how these ethical frameworks translate into practical, large-scale application in a highly regulated industry.

In conclusion, U.S. financial regulators’ advocacy for Anthropic’s Mythos AI marks a significant inflection point. It signals a governmental embrace of advanced AI as an indispensable tool for national economic security, even as the broader regulatory and ethical landscape for AI remains in flux. The coming months will reveal how financial institutions integrate this powerful technology, how regulators adapt to its implications, and how the ongoing tensions between innovation, security, and responsible AI governance ultimately resolve. The saga of Mythos is not just about a new AI model; it is a microcosm of the profound challenges and opportunities presented by the AI revolution itself.