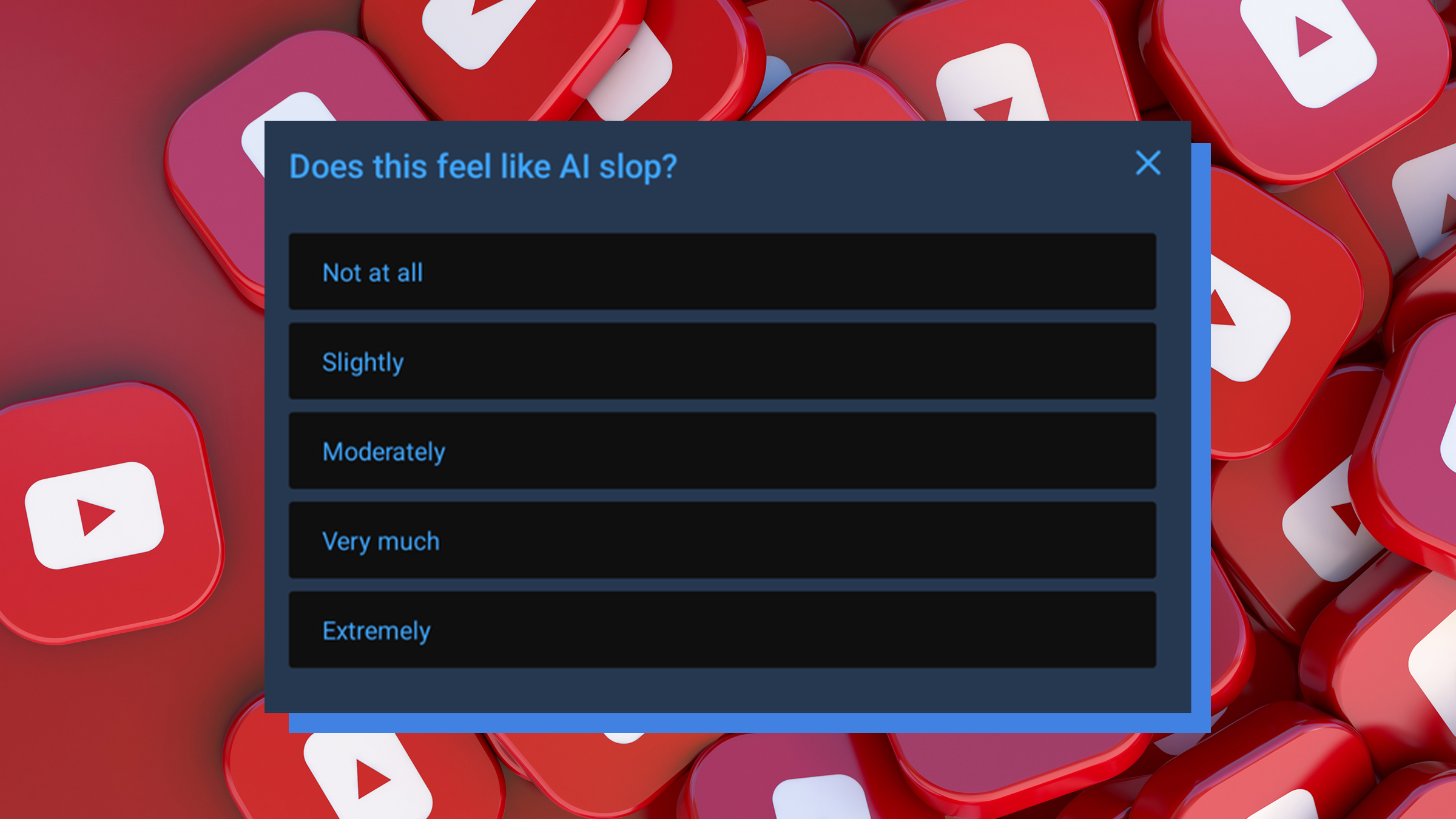

YouTube, the world’s preeminent video-sharing platform, has introduced a new pop-up survey prompting viewers to assess whether videos exhibit characteristics of "AI slop," a term referring to low-quality, often algorithmically generated content. The survey, which began rolling out on March 17, has swiftly ignited speculation among users and tech commentators alike, many of whom theorize that the company is quietly using its vast user base to train its own artificial intelligence models, potentially for content generation rather than merely detection. Instead of the familiar prompt asking about a video’s relevance to a search query, users are now occasionally presented with a direct question: "Does this feel like AI slop?" and asked to rate the video’s "vibes" on a scale ranging from "not at all" to "extremely" sloppy. This development arrives amid growing concern over the proliferation of AI-generated content across digital platforms and deepening skepticism among internet users about how major technology corporations use their data.

The introduction of this survey marks a significant shift in YouTube’s user interaction model and highlights the platform’s increasingly complex relationship with artificial intelligence. While YouTube’s stated intention is to combat the influx of low-quality AI-generated content, the timing and nature of the survey have fueled a narrative of corporate opportunism, where billions of users are, perhaps unknowingly, contributing free labor to enhance sophisticated AI systems.

The Rising Tide of AI-Generated Content and YouTube’s Stance

The digital landscape has witnessed an exponential surge in content produced or heavily assisted by artificial intelligence, from text and images to audio and video. This proliferation has given rise to the pejorative term "AI slop," describing content that is often formulaic, unoriginal, and lacking in genuine human creativity or insight. Such content can range from hastily assembled compilation videos and automatically generated news summaries to deepfake videos and misleading product reviews. The concern is that this deluge of low-quality, algorithmically optimized material threatens to dilute the overall quality of online content, making it increasingly difficult for users to distinguish authentic, human-created works from their AI counterparts.

A report published by Kapwing in late 2025 underscored the severity of this issue, projecting that over 20 percent of all content on YouTube would be classified as "AI slop" by the close of that year. This statistic points to a significant challenge for platforms like YouTube, which thrive on user engagement and content quality. Unchecked, the spread of AI slop could degrade user experience, erode trust in the platform, and create fertile ground for misinformation and manipulative content.

YouTube’s CEO, Neal Mohan, addressed this challenge head-on in his annual letter to creators in January 2026, explicitly naming the management of "AI slop" as a key priority for the coming year. Mohan articulated a clear vision for tackling this issue, stating, "It’s becoming harder to detect what’s real and what’s AI-generated. This is particularly critical when it comes to deepfakes." He emphasized YouTube’s commitment to leveraging its existing robust systems, which have historically been successful in combating spam and clickbait, to reduce the spread of low-quality, repetitive AI content. This public declaration positioned YouTube as a proactive guardian of content quality in the age of AI.

However, Mohan’s letter also contained a seemingly contradictory message that has added fuel to the current user speculation. In the same communication, he detailed plans for the expansion of YouTube’s own large language models (LLMs) to empower users to create videos and even simple games using text prompts. This dual strategy – simultaneously vowing to combat AI slop while also enabling its creation – creates an inherent tension that has not gone unnoticed by the platform’s more discerning users. The perception is that YouTube is walking a fine line, attempting to manage the negative externalities of AI content while also embracing its potential for creative output and, implicitly, for platform growth.

The Mechanics of the "AI Slop" Survey and User Reactions

The new survey, which commenced its rollout on March 17, presents itself to viewers during video playback. A small pop-up appears, typically covering only a minor portion of the video, with the direct query: "Does this feel like AI slop?" Users are then given a slider or a series of options to rate how AI-generated the video seems, from "not at all" to "extremely." This feedback mechanism replaces previous prompts that often sought user input on a video’s relevance to a search or its overall quality in a more general sense. The use of the term "AI slop" itself, a colloquial and derogatory descriptor, has caught the attention of many.

Almost immediately following its introduction, the survey became a topic of intense discussion across social media platforms, particularly X (formerly Twitter). Users, many of whom are already immersed in the world of AI development or are acutely aware of the tech industry’s data collection practices, quickly converged on a shared theory. The prevailing sentiment is that YouTube is not merely gathering data to improve the moderation algorithms that suppress low-quality AI content; rather, it is using its vast audience as an unpaid workforce to train its next generation of AI models. The goal, according to this theory, is not to eliminate AI-generated content but to refine YouTube’s own AI capabilities until they produce content so sophisticated that it becomes indistinguishable from human-created material.

Prominent voices on X articulated this suspicion with sharp criticism. User @barkmeta posted, "YouTube asking ‘does this feel like AI slop’ is not them protecting you. It’s them using you to train their next AI to make slop so good you’ll never be able to tell the difference. And they got you to do it for free." This tweet encapsulates the core accusation: that users are being exploited for their cognitive labor without compensation or explicit consent regarding the end-use of their feedback.

Another user, @TukiFromKL, echoed this sentiment, claiming, "YouTube isn’t banning AI slop.. They’re making you label it so they can train their next model to not look like slop." This perspective highlights the perceived deception, suggesting that the public-facing goal of "fighting slop" masks an underlying agenda of AI development. User @birdabo further lamented the situation, stating that the new prompt "sounds good until you realize they’re literally turning 2 billion users into unpaid AI trainers. classic google." The reference to "classic Google" underscores a broader pattern of distrust directed at the tech giant and its subsidiaries.

These reactions are not merely born out of cynical speculation but are rooted in a growing "AI paranoia" that has become a palpable undercurrent in modern internet culture. The rapid advancements in AI technology, coupled with a lack of transparency from tech companies regarding their data practices, have fostered an environment where users are increasingly wary of how their digital interactions are being leveraged. The idea of being an "unpaid AI trainer" resonates deeply with many, touching upon concerns about data privacy, intellectual property, and the ethics of corporate AI development.

Historical Precedents: A Pattern of Unpaid AI Training

The current wave of skepticism surrounding YouTube’s "AI slop" survey is significantly amplified by historical precedents involving Google, YouTube’s parent company, and other tech firms. These past instances have cultivated a pervasive sense of distrust among internet users, who have repeatedly discovered that their seemingly innocuous online activities were, in fact, contributing to the training of advanced AI systems.

One of the most widely cited examples is reCAPTCHA. Introduced as a security measure to distinguish human users from bots, reCAPTCHA often required users to decipher distorted text or identify objects in images. For years, users diligently completed these puzzles, believing they were simply proving their humanity. However, it was later revealed in 2018 that Google was simultaneously using these efforts to digitize books from archives, which trained its optical character recognition (OCR) AI, and to label imagery, specifically from Google Street View, for autonomous vehicle training. Every time a user identified a street sign or a building number, they were, in essence, providing valuable labeled data to Google’s AI, entirely free of charge. Because Google owns both YouTube and reCAPTCHA, suspicious users naturally draw a direct line between the two.

Just last weekend, news broke about Niantic, the developer behind the wildly popular augmented reality game Pokémon Go. It was revealed that the vast trove of photos players had taken of real-world locations through the game’s "PokéStop" submission feature – often depicting landmarks, statues, and other points of interest – was being used to train AI delivery robots. Players, in their pursuit of catching virtual creatures and enriching the game’s map, were inadvertently contributing to the development of commercial AI applications, again without explicit knowledge or compensation. This revelation further solidified the public perception that tech companies routinely harvest user-generated data for their AI endeavors, often without transparent disclosure.

These incidents are critical in understanding the current "AI paranoia." They illustrate a pattern where user interactions, ostensibly for one purpose (security, gaming, content consumption), are repurposed to serve the advanced data needs of AI development. This history creates a context in which YouTube’s new "AI slop" survey is not viewed in isolation but as another potential instance of a large corporation leveraging its user base for covert AI training. The argument is that while these companies benefit immensely from this "free labor," users receive no direct compensation or even clear acknowledgement of their contribution to groundbreaking AI advancements.

Implications and Broader Analysis

The implications of YouTube’s "AI slop" survey, whether its primary intent is detection or training, are multifaceted and touch upon critical aspects of data ethics, content quality, and the future of digital platforms.

The Critical Role of Data Labeling: At the heart of most advanced machine learning systems is the need for vast quantities of accurately labeled data. Training an AI to distinguish between "AI slop" and high-quality human-generated content requires millions of examples meticulously categorized by humans. Concepts like "quality," "originality," or "sloppiness" are inherently subjective and nuanced, making human judgment invaluable. If YouTube genuinely intends to build a robust AI model capable of detecting and filtering out low-quality AI content, then collecting human feedback on what constitutes "slop" is a logical and necessary step. This data would serve as the ground truth from which the AI learns to make its own classifications. However, the same labeled data is equally valuable for training AI models to generate content that avoids the characteristics of "slop," making it more human-like and appealing. This dual-use potential is precisely what fuels user suspicion.
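To make the idea of "ground truth" concrete, here is a minimal Python sketch of how individual survey answers could, in principle, be aggregated into one label per video. It is an illustration only, not a description of YouTube’s actual pipeline: the five-point scale (only its endpoints, "not at all" and "extremely," are reported), the function name `aggregate_labels`, the video IDs, and the `min_votes` and `threshold` values are all assumptions.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical five-point scale. Only the two endpoints ("not at all" and
# "extremely") are reported; the middle steps are assumed for illustration.
SCALE = {"not at all": 0, "slightly": 1, "moderately": 2, "very": 3, "extremely": 4}


def aggregate_labels(responses, min_votes=3, threshold=2.0):
    """Turn (video_id, rating) pairs into one binary 'slop' label per video.

    Videos with fewer than `min_votes` ratings are skipped, because a
    subjective judgment from one or two viewers is too noisy to trust.
    """
    scores = defaultdict(list)
    for video_id, rating in responses:
        scores[video_id].append(SCALE[rating])

    labels = {}
    for video_id, values in scores.items():
        if len(values) < min_votes:
            continue  # not enough votes to form a label
        labels[video_id] = mean(values) >= threshold
    return labels


# Made-up survey responses for three hypothetical videos.
responses = [
    ("vid_a", "extremely"), ("vid_a", "very"), ("vid_a", "moderately"),
    ("vid_b", "not at all"), ("vid_b", "slightly"), ("vid_b", "not at all"),
    ("vid_c", "extremely"),  # only one vote, so it is left unlabeled
]
labels = aggregate_labels(responses)
print(labels)
```

The resulting dictionary of labels is agnostic about its downstream use: the same table could supervise a detector that flags slop or score the output of a generator, which is the dual-use point made above.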

Ethical Concerns and User Consent: The central ethical dilemma revolves around informed consent and compensation. If YouTube is indeed using user feedback to train its AI models for commercial purposes (whether for moderation or generation), the question arises whether users are adequately informed about this process and whether their contributions warrant compensation. Current terms of service agreements are often broad and complex, allowing companies significant leeway in how they utilize user data. However, the recurring public outcry suggests a growing demand for greater transparency and more granular control over personal data, especially when it directly contributes to the development of valuable AI assets. The concept of "unpaid labor" in the context of AI training raises fundamental questions about digital ethics in the age of pervasive data collection.

Content Moderation vs. Content Generation: A Conflicting Agenda? YouTube, as a platform, finds itself in a precarious position. On one hand, it has a clear responsibility to its users to maintain content quality and combat harmful or low-value content, including AI slop. This necessitates robust detection and moderation systems. On the other hand, YouTube, like many tech companies, is actively investing in AI content generation tools, offering them to creators as a means to foster innovation and potentially increase content volume. This inherent conflict of interest – fighting AI slop while simultaneously promoting AI content creation – is a major factor in the public’s distrust. Users perceive a potential hypocrisy: why would a company invest heavily in tools that generate the very "slop" it claims to be fighting? The answer, many believe, lies in the pursuit of more sophisticated, undetectable AI-generated content.

The Future of Content Quality and Authenticity: The long-term implications for content quality on platforms like YouTube are significant. If AI models become exceptionally adept at generating content that is indistinguishable from human-made material, the very definition of "authenticity" in digital media will be challenged. This could lead to a devaluation of human creativity, an overwhelming flood of generic content, and greater difficulty for users in telling truth from sophisticated fabrication. The current survey, therefore, represents a critical juncture in how YouTube, and indeed the internet at large, grapples with the evolving nature of digital content.

Regulatory Landscape: Governments and regulatory bodies worldwide are increasingly grappling with the challenges posed by AI, particularly concerning data privacy, intellectual property, and transparency. Initiatives like the EU’s AI Act aim to establish comprehensive regulatory frameworks. The controversy surrounding YouTube’s survey could further underscore the need for clearer guidelines on how user-generated data is utilized for AI training, demanding greater accountability from tech platforms.

Potential Alternative Interpretations: While user theories are compelling and rooted in historical precedent, it is also important to acknowledge that YouTube could genuinely be using this data to improve its detection algorithms to filter out bad AI. The data collected could inform both suppression and improvement, as the same underlying AI technology can be used for both tasks. The difficulty of distinguishing AI from human content makes human feedback invaluable for any AI model, whether its purpose is to detect or generate. An objective analysis must consider that YouTube’s stated goal of combating AI slop is a legitimate business imperative to maintain platform quality and user trust. The challenge lies in the lack of transparent communication regarding the full scope of data utilization.

In conclusion, YouTube’s new "AI slop" survey has inadvertently opened a Pandora’s box of questions surrounding corporate AI development, data ethics, and user trust. The reaction from the internet community, fueled by past experiences and a growing "AI paranoia," highlights a critical tension between the innovative potential of artificial intelligence and the ethical responsibilities of the companies wielding it. As AI embeds itself deeper into our digital lives, the debate over who trains these powerful systems, how those contributions are valued, and what the ultimate purpose of the training truly is will only intensify, demanding greater transparency and accountability from the tech giants that shape our online world.