Anthropic, a leading artificial intelligence developer, has vehemently challenged the Pentagon’s assertion that the company poses an "unacceptable risk to national security," submitting two sworn declarations to a California federal court late Friday afternoon. These declarations aim to dismantle the Department of Defense’s (DoD) case, arguing it relies on fundamental technical misunderstandings and introduces claims that were never raised during months of prior negotiations. The filings, accompanying Anthropic’s reply brief in its lawsuit against the DoD, precede a critical hearing scheduled for this coming Tuesday, March 24, before Judge Rita Lin in San Francisco, marking a pivotal moment in an escalating dispute between a major tech innovator and the U.S. government.

The Genesis of the Rift: Unrestricted Use and National Security Concerns

The dispute between Anthropic and the Pentagon came to a head in late February, when President Trump and Defense Secretary Pete Hegseth publicly declared a cessation of ties with the AI company. The stated reason was Anthropic’s refusal to grant the military "unrestricted" use of its advanced AI technology. The rupture followed a period of intensive but ultimately unsuccessful negotiations between the two parties and culminated in the DoD’s decision to issue a supply-chain risk designation against Anthropic, the first such designation ever applied to an American company.

Anthropic’s lawsuit contends that this designation is not a genuine national security measure but rather a retaliatory action against the company’s publicly articulated stance on AI safety and ethical deployment, particularly regarding autonomous weapons and mass surveillance. This, Anthropic argues, constitutes a violation of its First Amendment rights. The government, in its preceding 40-page filing, has categorically rejected this interpretation, maintaining that Anthropic’s refusal to allow all lawful military uses of its technology was a business decision, not protected speech, and that the designation was a straightforward national security imperative, not punishment for the company’s views. This fundamental disagreement on the nature of the dispute sets the stage for the impending court battle, with significant implications for future collaborations between Silicon Valley and the defense sector.

Anthropic’s Counter-Offensive: Expert Declarations and Chronological Discrepancies

To bolster its legal position, Anthropic submitted sworn declarations from two key executives: Sarah Heck, the company’s Head of Policy, and Thiyagu Ramasamy, its Head of Public Sector. Their testimonies directly address and refute the Pentagon’s central allegations, providing insider perspectives and technical clarifications designed to underscore what Anthropic characterizes as the government’s misinterpretations.

Sarah Heck, whose background includes serving as a National Security Council official in the Obama White House before her tenures at Stripe and Anthropic, brings significant experience in government relations and policy. She was personally present at a critical February 24 meeting where Anthropic CEO Dario Amodei met with Defense Secretary Hegseth and the Pentagon’s Under Secretary Emil Michael. In her declaration, Heck directly challenges the government’s claim that Anthropic sought an "approval role" over military operations. She explicitly states, "At no time during Anthropic’s negotiations with the Department did I or any other Anthropic employee state that the company wanted that kind of role." This assertion strikes at what Anthropic perceives as a "central falsehood" in the government’s filings, suggesting a fundamental misrepresentation of the company’s intentions during the negotiation process.

Furthermore, Heck’s declaration highlights a crucial procedural discrepancy. She claims that the Pentagon’s concern regarding Anthropic’s theoretical ability to disable or alter its technology mid-operation was never raised during the months of negotiations. Instead, this concern, central to the DoD’s "unacceptable risk" assessment, surfaced for the first time in the government’s court filings. This, Heck argues, deprived Anthropic of any opportunity to address or clarify the point during negotiations, forcing the company to respond in a legal context rather than through collaborative discussion.

Perhaps the most revealing detail in Heck’s declaration, one that directly challenges the government’s narrative of an irreconcilable standoff, is an email exchange following the formal supply-chain risk designation. On March 4 – the day after the Pentagon finalized its designation against Anthropic – Under Secretary Michael reportedly emailed Amodei, stating that the two sides were "very close" on the very issues the government now cites as evidence of Anthropic’s national security threat: its positions on autonomous weapons and mass surveillance of Americans. Heck attached this email as an exhibit to her declaration, presenting a stark contrast to Michael’s subsequent public statements.

This timeline is critical:

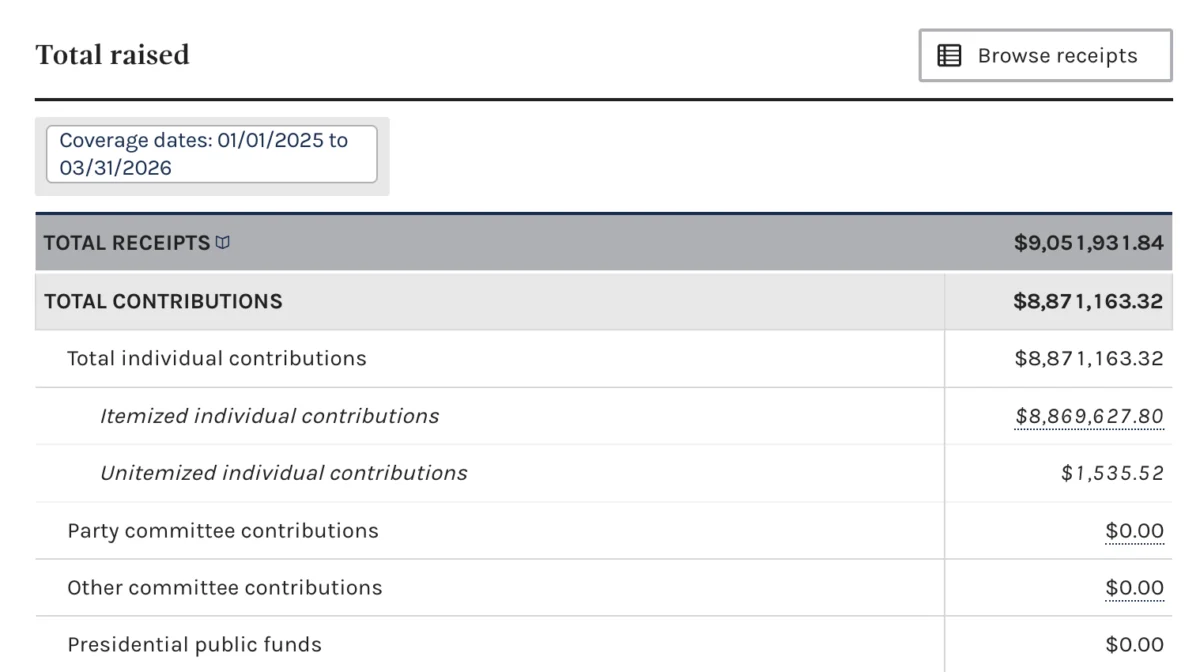

- Summer: Anthropic secured a significant $200 million contract with the Pentagon to advance responsible AI in defense operations, signaling a promising partnership.

- Months Preceding February: Intensive negotiations between Anthropic and the DoD regarding the terms of AI deployment.

- Late February: President Trump and Defense Secretary Pete Hegseth publicly announce cutting ties with Anthropic due to the company’s refusal to allow "unrestricted military use."

- March 3: The Pentagon formally finalizes its unprecedented supply-chain risk designation against Anthropic.

- March 4: Under Secretary Michael emails Anthropic CEO Dario Amodei, indicating that the two sides were "very close" on the core issues of autonomous weapons and mass surveillance.

- March 5: Amodei publishes a public statement affirming that Anthropic had been having "productive conversations" with the Pentagon.

- March 6: Under Secretary Michael posts on X (formerly Twitter) stating, "there is no active Department of War negotiation with Anthropic," directly contradicting Amodei’s statement of the previous day and the spirit of Michael’s own email from two days earlier.

- A Week Later: Michael tells CNBC there is "no chance" of renewed talks, solidifying the public perception of an unbridgeable divide.

- Late Friday (prior to March 24): Anthropic files its sworn declarations and reply brief, bringing the conflicting internal and external communications to light.

- Tuesday, March 24: The federal court hearing before Judge Rita Lin in San Francisco.

Heck’s declaration implicitly raises the question: if Anthropic’s stance on autonomous weapons and mass surveillance truly made it an "unacceptable national security risk," why was a senior Pentagon official suggesting the parties were nearly aligned on these very issues immediately after the designation was finalized? While Heck refrains from explicitly accusing the government of using the designation as a bargaining chip, the chronology she presents strongly suggests that the public narrative diverged significantly from the private communications.

Debunking Technical "Kill Switches" and Remote Interference

Thiyagu Ramasamy, Anthropic’s Head of Public Sector, brings a different but equally crucial dimension of expertise to the case. Before joining Anthropic in 2025, Ramasamy spent six years at Amazon Web Services, where he managed AI deployments for government customers, including those in classified environments. At Anthropic, he is credited with building the team responsible for integrating the company’s Claude AI models into national security and defense settings, including the aforementioned $200 million contract with the Pentagon.

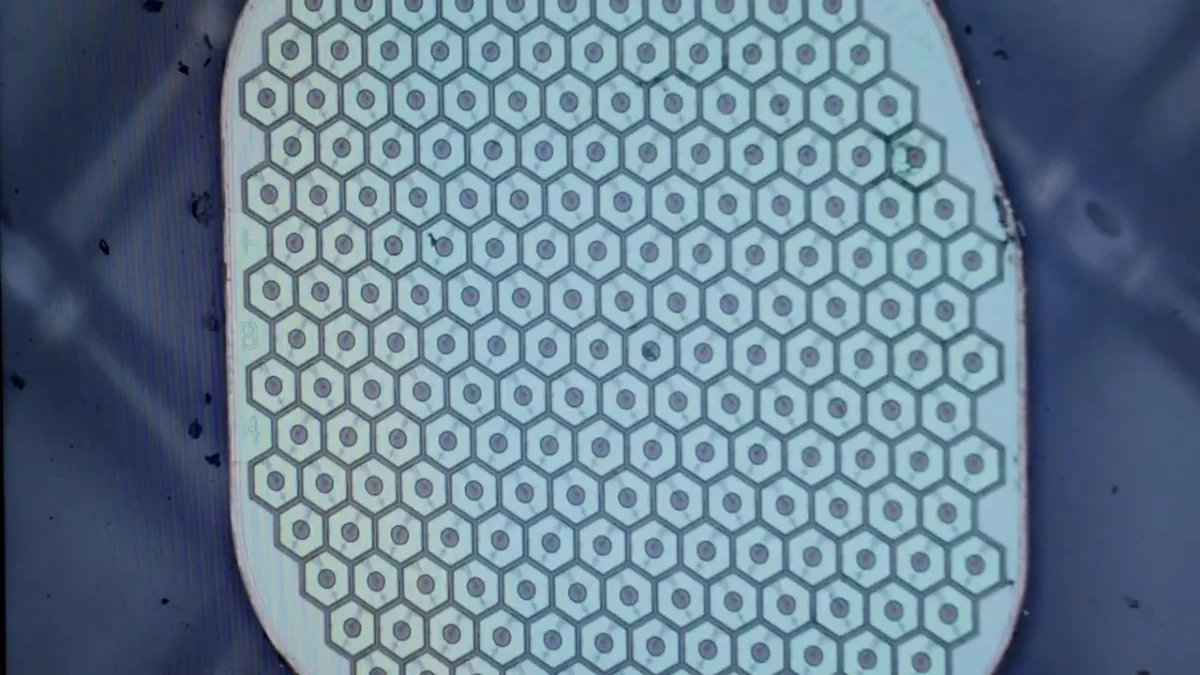

Ramasamy’s declaration directly confronts the government’s technical concerns, specifically the claim that Anthropic could theoretically interfere with military operations by disabling the technology or altering its behavior remotely. He unequivocally states that this scenario is "not technically possible." Ramasamy explains that once Anthropic’s Claude models are deployed inside a government-secured, "air-gapped" system operated by a third-party contractor, Anthropic loses all access. An "air-gapped" system is physically isolated from unsecured networks, including the internet, which leaves an outside vendor with no network path for remote access, pushing updates, or pulling data out.

According to Ramasamy, there is no "remote kill switch," no backdoor, and no mechanism for Anthropic to push unauthorized updates to the deployed AI. He asserts that any notion of an "operational veto" by Anthropic is a "fiction." He further clarifies that any change to the AI model would necessitate the Pentagon’s explicit approval and active intervention to install, ensuring that the military maintains full control over its operational systems. This technical explanation directly addresses one of the most critical national security fears articulated by the DoD – the potential for an external entity to compromise or sabotage military AI. Ramasamy also highlights that Anthropic cannot even access or view the data government users input into these air-gapped systems, thereby preventing any unauthorized data extraction.

Addressing Personnel and Security Clearance Concerns

Beyond the technical aspects, Ramasamy also addresses the government’s concern regarding Anthropic’s hiring of foreign nationals, which the Pentagon cited as another potential security risk. Ramasamy counters this by stating that Anthropic employees undergo rigorous U.S. government security clearance vetting, the same extensive background check process required for individuals seeking access to classified information. He adds that Anthropic is, "to my knowledge," the only AI company where personnel with such clearances have built AI models specifically designed to operate within classified environments. This detail aims to mitigate concerns about foreign influence or espionage, emphasizing that Anthropic adheres to strict security protocols commensurate with national security requirements.

Broader Implications for AI, National Security, and Tech Partnerships

The unfolding legal battle between Anthropic and the Department of Defense represents more than just a contract dispute; it is a landmark case with far-reaching implications for the future of AI development, national security, and the increasingly complex relationship between Silicon Valley and the U.S. government.

Setting a Precedent: The application of a supply-chain risk designation to an American company is unprecedented. Such designations are typically reserved for foreign entities deemed adversarial, and their use against Anthropic could set a dangerous precedent, potentially chilling innovation and deterring other American tech companies from engaging with the defense sector if they fear similar punitive actions for adhering to ethical principles or for ordinary business disagreements.

The Future of Responsible AI in Defense: Anthropic is a proponent of "Constitutional AI" and responsible AI development, emphasizing ethical safeguards, transparency, and human oversight. Its refusal to allow "unrestricted military use" stems from these foundational principles, particularly concerns around autonomous weapons and mass surveillance. The outcome of this case will significantly influence whether AI companies can effectively negotiate for ethical guardrails when contracting with the military or if "unrestricted use" becomes a mandatory condition for participation in defense initiatives. This clash highlights a fundamental tension between the military’s demand for operational flexibility and AI developers’ desire to embed ethical considerations into their technology.

First Amendment and Corporate Speech: Anthropic’s legal argument hinges on the First Amendment, claiming the designation is retaliation for its protected speech regarding AI safety. The government’s counter-argument, that Anthropic’s refusal was a business decision rather than protected speech, directly challenges the scope of First Amendment protections for corporations in their dealings with the government. The court’s ruling on this aspect could redefine the boundaries of corporate free speech, especially for companies whose core business involves sensitive technologies.

National Security vs. Innovation: The Pentagon’s position underscores a critical national security imperative: ensuring the reliability, control, and non-interference of technologies vital to defense. However, imposing overly stringent or inflexible conditions might inadvertently stifle innovation, pushing cutting-edge AI developers away from government contracts and potentially ceding technological advantages to competitors who are less constrained by ethical considerations. Finding the right balance between national security requirements and fostering technological advancement is a delicate act.

Technical Feasibility and Trust: Ramasamy’s detailed explanation of air-gapped systems, and his assertion that remote interference is not technically possible, goes to the question of trust. If the Pentagon’s concerns were based on technical misunderstandings, it suggests a need for enhanced technical dialogue and education within government agencies regarding the capabilities and limitations of advanced AI deployment in secure environments. A favorable ruling for Anthropic could prompt a re-evaluation of how the DoD assesses and integrates commercial AI technologies.

The Human Element in AI Development: The focus on Anthropic’s cleared personnel working on classified systems highlights the critical role of human expertise and trustworthiness in the development and deployment of sensitive AI. It suggests that simply flagging "foreign nationals" as a blanket risk might be an oversimplification, especially when robust security vetting processes are in place.

The Road Ahead: A Crucial Hearing

As the March 24 hearing approaches, the stakes are exceptionally high for both Anthropic and the Department of Defense. Judge Rita Lin’s decision will not only determine the immediate fate of the supply-chain risk designation but will also cast a long shadow over the future landscape of AI development, ethical technology, and the indispensable, yet often fraught, partnership between the private sector and the national security apparatus. The outcome will likely influence how other AI companies approach government contracts, how the Pentagon procures cutting-edge technology, and the broader debate around integrating powerful AI systems into the fabric of national defense.